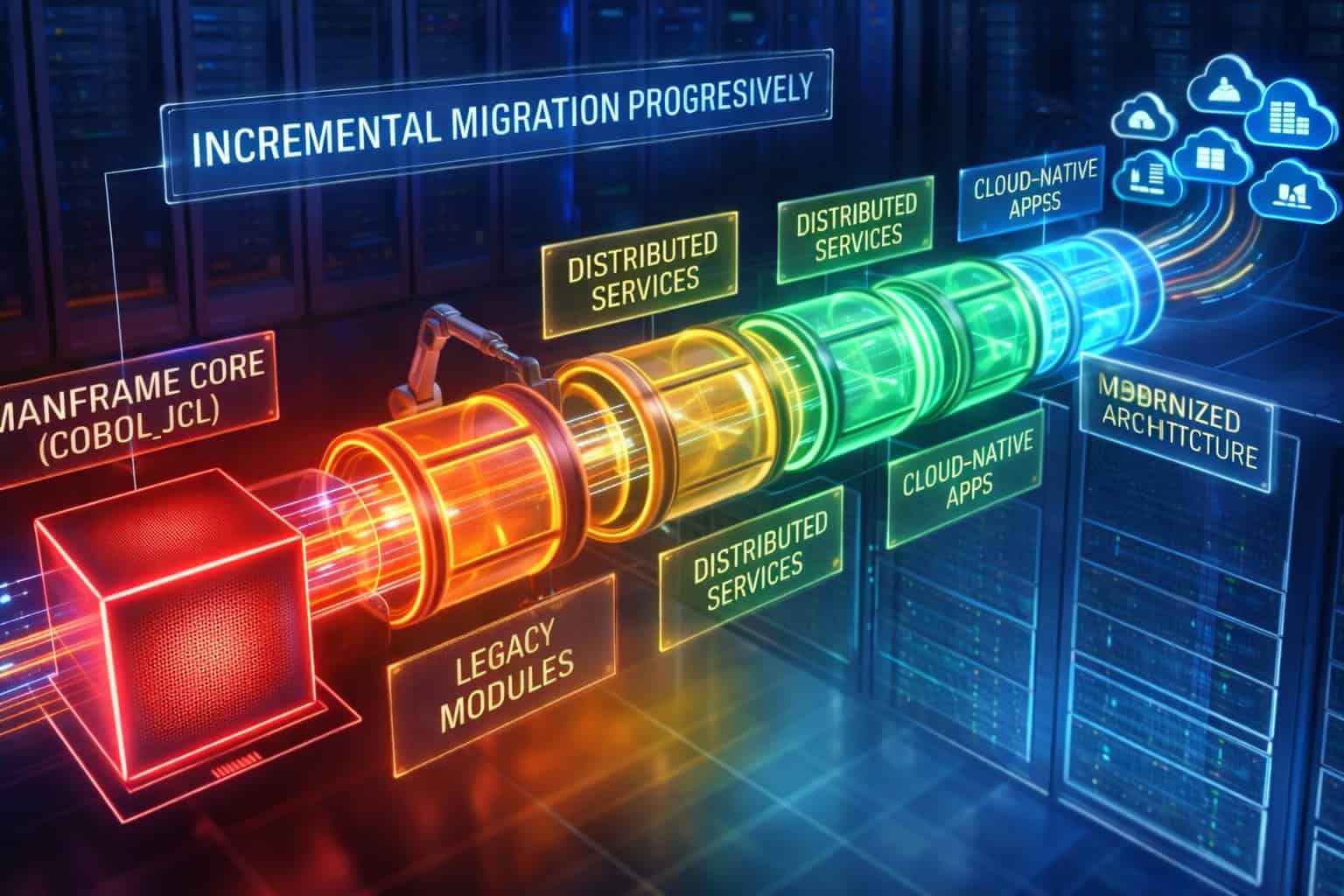

Incremental mainframe migration has become the dominant strategy for enterprises seeking to modernize without disrupting mission-critical operations. Rather than attempting full rewrites or high-risk cutovers, organizations increasingly pursue phased transformation across COBOL programs, JCL workflows, and distributed services. This approach reflects operational reality in large estates, where systems must continue to process transactions, settle batches, and meet regulatory obligations throughout the migration journey.

Despite its appeal, incremental migration introduces a unique class of technical complexity. COBOL logic, JCL orchestration, and distributed runtimes were rarely designed to evolve independently. Over decades, execution flow, data timing, and failure handling have become tightly intertwined across these layers. When migration initiatives attempt to extract or modernize one element at a time, hidden coupling surfaces in unexpected ways, slowing progress and increasing operational risk. These challenges are amplified in environments already struggling with legacy system modernization approaches, where documentation no longer reflects actual system behavior.

The most difficult problems rarely appear at the level of individual programs or services. Instead, they emerge at the boundaries between batch and online processing, between scheduled execution and event-driven flows, and between deterministic mainframe logic and distributed retry semantics. Incremental migration efforts often stall when these boundaries are crossed without a clear understanding of execution paths and data dependencies. What appears to be a contained change can propagate across platforms, forcing teams into prolonged stabilization cycles rather than steady transformation.

Successfully migrating across COBOL, JCL, and distributed services therefore depends on more than tooling or migration patterns. It requires a precise understanding of how systems execute today, how responsibilities are shared across components, and how behavior changes when parts of the system move independently. As enterprises pursue incremental modernization strategies, the ability to reason about execution continuity, data flow integrity, and failure semantics becomes the defining factor between controlled progress and stalled transformation.

Structural Coupling Between COBOL Programs and JCL Workflows

Incremental mainframe migration frequently underestimates the degree to which COBOL programs and JCL workflows are structurally inseparable. While they are often managed as distinct artifacts, their execution semantics have evolved together over decades. JCL does far more than schedule programs. It defines execution order, conditional branching, restart behavior, dataset lifecycles, and recovery semantics that COBOL code implicitly relies on. Treating these elements independently during migration introduces risk that is not immediately visible at the code level.

This coupling becomes particularly problematic when migration initiatives focus on extracting or modernizing COBOL logic without accounting for its operational context. The behavior of a program in isolation rarely matches its behavior within a production job stream. Incremental migration that ignores this relationship often leads to functional drift, inconsistent data states, and prolonged stabilization cycles that undermine the benefits of phased transformation.

JCL as an Execution Control Layer, Not Just Scheduling Logic

JCL is frequently mischaracterized as a scheduling or orchestration mechanism whose primary role is to invoke programs in sequence. In reality, JCL functions as an execution control layer that defines how and when COBOL programs run, under what conditions they branch, and how they respond to both success and failure states. Conditional statements, return code checks, and dataset disposition rules encode business and operational logic that is external to the program itself.

When COBOL programs are migrated incrementally without their associated JCL context, this control logic is often reimplemented implicitly or overlooked entirely. The result is behavior that diverges subtly from production norms. A program that appears functionally correct in isolation may execute under different conditions, process different data scopes, or fail to trigger downstream steps when expected.

This problem is exacerbated in environments where JCL has accumulated layered conditions over time. Emergency fixes, regulatory exceptions, and operational safeguards are frequently encoded directly into job streams rather than application logic. These constructs may only activate under specific circumstances, making them easy to miss during analysis. Without visibility into this control layer, migration teams risk stripping away behavior that is critical to production stability.

Understanding JCL as an execution control mechanism is therefore essential for safe incremental migration. It ensures that modernization efforts preserve not just functional outcomes, but the operational semantics that govern when and how those outcomes are produced.
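To make the idea of control logic living outside the program concrete, the following is a minimal sketch of how step-level `COND` tests decide which steps run. It models only the basic rule that a step is bypassed when its test is true for any earlier step's return code. The names `Step`, `run_job`, and the step names are hypothetical, and abend handling (`EVEN`, `ONLY`) and `IF/THEN/ELSE` constructs are deliberately left out.

```python
import operator
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# JCL COND tests compare the coded value (left) with a prior step's return
# code (right). If the test is true for any prior step, the step is bypassed.
_OPS = {"GT": operator.gt, "GE": operator.ge, "EQ": operator.eq,
        "NE": operator.ne, "LT": operator.lt, "LE": operator.le}


@dataclass
class Step:
    name: str
    program: Callable[[], int]               # hypothetical stand-in returning a return code
    cond: Optional[Tuple[int, str]] = None   # e.g. (4, "LT") for COND=(4,LT)


def run_job(steps: List[Step]) -> Dict[str, int]:
    """Run steps in order and return the return code of every step that ran."""
    return_codes: Dict[str, int] = {}
    for step in steps:
        if step.cond is not None:
            code, op = step.cond
            if any(_OPS[op](code, rc) for rc in return_codes.values()):
                continue  # bypassed steps do not run and record no return code
        return_codes[step.name] = step.program()
    return return_codes


job = [
    Step("EXTRACT", lambda: 8),                 # simulated failure-level return code
    Step("POST", lambda: 0, cond=(4, "LT")),    # bypassed when 4 < any prior return code
    Step("NOTIFY", lambda: 0, cond=(8, "GT")),  # bypassed when 8 > any prior return code
]
executed = run_job(job)
```

In this sketch `POST` never runs after a failing `EXTRACT`, while `NOTIFY` runs only in that case. Neither rule is visible in the programs themselves, which is why extracting a program without its job stream can silently change when it executes.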

Conditional Job Flows and Their Impact on Migration Boundaries

Conditional job flows represent one of the most significant barriers to clean migration boundaries. In many mainframe environments, execution paths diverge based on return codes, dataset availability, or external signals. These conditions determine which programs run, which steps are skipped, and how data is handled across a job stream.

Incremental migration efforts often assume linear execution models that do not reflect this reality. When a COBOL program is extracted or rehosted without accounting for conditional job flow, the migrated component may execute more frequently or under different circumstances than intended. This mismatch introduces data integrity risks and unpredictable operational behavior.

Conditional flows also complicate rollback and recovery. In traditional environments, JCL conditions define restart points and compensation behavior. When part of the flow is migrated and part remains on the mainframe, maintaining consistent restart semantics becomes challenging. Teams may discover that recovery procedures no longer align across platforms, increasing operational risk during incidents.

These issues highlight the importance of analyzing job flow structure before defining migration boundaries. Conditional execution paths must be identified and preserved to ensure behavioral continuity. This challenge is closely related to issues discussed in how to map JCL, where understanding program invocation context proves critical to accurate system understanding.

Dataset Lifecycles as Implicit Coupling Mechanisms

Beyond control flow, datasets form another layer of implicit coupling between COBOL programs and JCL workflows. JCL defines dataset creation, retention, sharing, and disposal rules that govern how data moves through a job stream. COBOL programs often assume these rules implicitly, relying on JCL to manage data availability and lifecycle.

During incremental migration, dataset handling is frequently reinterpreted or abstracted without fully replicating original semantics. Temporary datasets may become persistent, shared datasets may be duplicated, or cleanup logic may be altered. These changes can have cascading effects on downstream processing and data consistency.

The challenge is that dataset lifecycles are rarely documented in a centralized manner. They are encoded across multiple job steps and reinforced through operational conventions. Migration teams that focus solely on code-level analysis may miss these dependencies, leading to subtle but impactful deviations.

Preserving dataset semantics requires understanding how data flows through job streams and how lifecycle rules influence execution. Without this understanding, incremental migration risks introducing hidden data coupling issues that only surface under load or failure conditions.
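As an illustration of how lifecycle rules sit outside the program, here is a simplified sketch of the three-part `DISP` parameter (status, normal disposition, abnormal disposition). It is an assumption-laden model rather than a faithful emulation: `KEEP` and `CATLG` are both treated as "the dataset survives", `PASS` is not modeled, and the names `Disp`, `end_of_step`, and `WORK.SORTED` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Disp:
    status: str    # "NEW", "OLD" or "SHR"
    normal: str    # applied when the step ends normally: "KEEP", "CATLG" or "DELETE"
    abnormal: str  # applied when the step abends


def end_of_step(existing: frozenset, dsn: str, disp: Disp, abended: bool) -> frozenset:
    """Return the set of datasets that exist after the step has ended."""
    if disp.status == "NEW":
        existing = existing | {dsn}  # allocated when the step starts
    elif dsn not in existing:
        raise LookupError(f"{dsn} not found for status {disp.status}")
    outcome = disp.abnormal if abended else disp.normal
    if outcome == "DELETE":
        return existing - {dsn}
    return existing  # KEEP / CATLG: the dataset survives the step


# A work file that is kept for the next step on success and cleaned up on failure.
work = Disp(status="NEW", normal="CATLG", abnormal="DELETE")
after_success = end_of_step(frozenset(), "WORK.SORTED", work, abended=False)
after_abend = end_of_step(frozenset(), "WORK.SORTED", work, abended=True)
```

A migrated step that writes the same file to object storage or a shared volume would need an equivalent cleanup rule on failure. Otherwise a rerun may pick up stale output that the original job stream would have deleted.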

Restart and Recovery Semantics Embedded in Job Design

Restart and recovery behavior in mainframe environments is often embedded directly into job design rather than application logic. JCL restart parameters, checkpointing conventions, and conditional rerun logic define how systems recover from partial failures. COBOL programs are written with these mechanisms in mind, assuming certain restart guarantees.

When migration efforts separate programs from their job context, these assumptions may no longer hold. A migrated component may lack equivalent restart semantics, forcing teams to redesign recovery procedures or accept increased risk. This redesign effort is frequently underestimated and becomes a source of delay in incremental migration programs.

Maintaining consistent recovery behavior across migration phases is critical for operational stability. It ensures that failure handling remains predictable even as components move across platforms. This concern connects closely with broader challenges in managing parallel run periods, where recovery consistency is a defining success factor.
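One way to carry step-level restart behavior into a target platform is to record completed steps explicitly and skip them on rerun. The sketch below assumes an in-memory `checkpoint` dictionary and hypothetical step names; a real implementation would need durable storage and would also have to consider checkpoints taken inside a step, which this example does not cover.

```python
from typing import Callable, Dict, List, Tuple


def run_with_restart(steps: List[Tuple[str, Callable[[], None]]],
                     checkpoint: Dict[str, List[str]]) -> List[str]:
    """Run steps in order, skipping any step already recorded as completed."""
    done = checkpoint.setdefault("completed", [])
    for name, action in steps:
        if name in done:
            continue      # completed in an earlier attempt, do not rerun
        action()          # may raise; the checkpoint then still points at the last good step
        done.append(name)
    return done


calls: List[str] = []
flaky = {"fail_once": True}


def load() -> None:
    calls.append("LOAD")


def post() -> None:
    if flaky["fail_once"]:
        flaky["fail_once"] = False
        raise RuntimeError("simulated abend")
    calls.append("POST")


steps = [("LOAD", load), ("POST", post)]
checkpoint: Dict[str, List[str]] = {}
try:
    run_with_restart(steps, checkpoint)   # first attempt fails in POST
except RuntimeError:
    pass
run_with_restart(steps, checkpoint)       # restart resumes at POST, LOAD is not repeated
```

The important property is that `LOAD` runs exactly once across both attempts, which is the guarantee a program written for step-level restart typically relies on.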

Structural coupling between COBOL and JCL is therefore not an obstacle to migration, but a reality that must be addressed explicitly. Incremental migration succeeds when these relationships are understood, respected, and deliberately preserved across transformation phases.

Why Incremental Migration Breaks at the Batch and Online Boundary

The boundary between batch processing and online transaction systems is one of the most fragile points in incremental mainframe migration. Although batch and online workloads are often discussed as separate domains, in mature enterprise environments they operate as a tightly coordinated system. Batch jobs prepare, aggregate, and reconcile data that online systems consume in near real time. Incremental migration efforts that treat these domains independently frequently encounter instability when execution timing, data availability, or failure handling diverges.

This fragility is amplified in hybrid architectures where parts of the batch pipeline remain on the mainframe while online services are incrementally moved to distributed platforms. The assumptions that governed batch-online coordination for decades no longer hold once execution spans multiple runtimes. Without precise understanding of how batch outputs align with online expectations, migration initiatives stall at this boundary, not because of technical impossibility, but because of behavioral uncertainty.

Temporal Dependencies Between Batch Completion and Online Availability

One of the most underestimated challenges in incremental migration is the presence of temporal dependencies between batch execution and online system availability. Many online applications assume that specific batch cycles have completed successfully before transactions are processed. These assumptions are rarely enforced through explicit synchronization mechanisms. Instead, they are embedded in operational schedules, cutoff times, and informal runbooks.

When batch workloads are migrated incrementally, execution timing often changes. Distributed batch frameworks may execute faster, slower, or with different retry semantics compared to their mainframe counterparts. Even minor shifts in completion timing can expose online systems to partially prepared datasets, leading to inconsistent behavior that is difficult to diagnose.

These timing issues are particularly problematic during phased migration, where some batch steps execute on the mainframe while others execute on distributed platforms. Online systems may observe mixed states that never existed in the original environment. Recovery procedures that once relied on predictable batch windows become unreliable, increasing operational risk.

Understanding and preserving temporal dependencies is essential for maintaining stability across the batch-online boundary. Without explicit modeling of these relationships, incremental migration introduces subtle race conditions that only surface under load or failure scenarios.
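A temporal dependency that lives only in a schedule can be turned into an explicit check. The following sketch assumes a hypothetical `BatchLedger` in which each batch cycle records its completion per business date, and an `online_ready` gate that online services consult before accepting work. The cycle names are invented for illustration.

```python
from datetime import date
from typing import Iterable, List, Set, Tuple


class BatchLedger:
    """Hypothetical record of which batch cycles completed for which business date."""

    def __init__(self) -> None:
        self._completed: Set[Tuple[str, date]] = set()

    def mark_complete(self, cycle: str, business_date: date) -> None:
        self._completed.add((cycle, business_date))

    def is_complete(self, cycle: str, business_date: date) -> bool:
        return (cycle, business_date) in self._completed


def online_ready(ledger: BatchLedger, required_cycles: Iterable[str],
                 business_date: date) -> Tuple[bool, List[str]]:
    """Return (ready, missing_cycles) for the given business date."""
    missing = [c for c in required_cycles if not ledger.is_complete(c, business_date)]
    return (not missing, missing)


ledger = BatchLedger()
today = date(2024, 1, 15)
ledger.mark_complete("REFDATA_REFRESH", today)
ready, missing = online_ready(ledger, ["REFDATA_REFRESH", "BALANCE_RECON"], today)
```

With a gate like this, a batch step that finishes later than it used to on a new platform produces a visible "not ready" state instead of a silent race against the online opening time.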

Data Consistency Expectations Embedded in Online Logic

Online applications often encode implicit assumptions about data consistency that originate from batch processing behavior. For example, online transactions may assume that reference tables are fully refreshed, balances are reconciled, or aggregations are complete before user activity begins. These assumptions are rarely validated dynamically, as they were historically guaranteed by batch execution order.

Incremental migration disrupts these guarantees. When batch steps are relocated or reimplemented, the consistency model may change. Distributed systems may expose intermediate states that were previously hidden, or apply eventual consistency where strong consistency was assumed. Online logic that was never designed to handle such states begins to exhibit unpredictable behavior.

This mismatch creates a feedback loop that complicates migration. Online failures trigger investigation into batch processes, while batch changes are constrained by online stability requirements. Migration teams find themselves unable to progress without freezing one side of the boundary, undermining the incremental approach.

Addressing this challenge requires making data consistency assumptions explicit. Migration efforts must identify which batch outputs are critical for online correctness and ensure equivalent guarantees are preserved. This issue aligns closely with challenges discussed in incremental data migration strategies, where partial data movement introduces consistency risk.

Failure Propagation Across Batch and Online Domains

Failures that cross the batch-online boundary are particularly difficult to isolate during incremental migration. A batch failure may manifest hours later as an online issue, or an online overload may cause batch delays due to shared resources. In hybrid environments, these interactions become harder to trace as components span platforms.

Incremental migration increases the number of failure paths by introducing new integration points and execution contexts. A failure in a migrated batch step may propagate differently than in the original environment, triggering online symptoms that do not match historical patterns. Recovery teams struggle to determine whether issues originate in migrated components or legacy ones, slowing resolution.

The lack of unified execution visibility across batch and online domains exacerbates this problem. Monitoring tools often focus on one domain or the other, leaving gaps at the boundary. During incidents, teams must correlate signals manually, increasing mean time to recovery (MTTR) and recovery variance.

Understanding failure propagation requires analyzing how batch and online systems interact under both normal and exceptional conditions. Without this analysis, incremental migration introduces new operational blind spots that hinder stability.

Incremental Cutover Complexity at the Batch-Online Interface

The act of cutting over functionality incrementally at the batch-online boundary introduces its own complexity. Migration plans often assume that components can be switched independently. In practice, batch and online systems must be cut over in coordinated phases to preserve behavioral integrity.

Partial cutovers create hybrid execution paths where some transactions rely on migrated batch outputs while others depend on legacy processing. These mixed states are difficult to test comprehensively and often reveal issues only in production. Rollback procedures become complicated, as reversing one side of the boundary may not restore original behavior.

This complexity forces organizations to adopt conservative cutover strategies that slow migration progress. Teams delay transitions until they are confident that all interactions are understood, reducing the agility benefits of incremental migration.

Addressing cutover complexity requires precise knowledge of batch-online interactions and their dependencies. Insights similar to those described in batch workload modernization challenges highlight the need for careful sequencing and impact awareness.

Incremental migration succeeds at the batch-online boundary when execution timing, data consistency, failure propagation, and cutover sequencing are understood and managed as a cohesive system rather than isolated concerns.

Managing Execution Path Continuity During COBOL Extraction

Incremental COBOL extraction is often presented as a code-centric exercise, yet its true complexity lies in preserving execution path continuity as components move across platforms. COBOL programs rarely operate as isolated units. Their behavior is shaped by invocation context, upstream data preparation, downstream consumption, and environmental conditions that collectively define how execution unfolds in production. When extraction efforts focus narrowly on program logic, these contextual factors are easily lost.

Execution path continuity is critical because it determines whether migrated components behave consistently with their legacy counterparts. Even small deviations in control flow, invocation timing, or data handling can introduce subtle behavioral drift. In large enterprises, such drift accumulates across migration phases, leading to unpredictable system behavior that slows progress and erodes confidence in the incremental approach.

Preserving Conditional Logic Fidelity Across Migration Phases

Conditional logic embedded in COBOL programs often reflects decades of business exceptions, regulatory accommodations, and operational safeguards. These conditions may depend on data values, execution context, or external signals that are not immediately obvious during extraction. Preserving their fidelity is essential for maintaining execution continuity.

During incremental migration, conditional logic is frequently reinterpreted or refactored to align with new platforms or frameworks. While such refactoring may improve readability or performance, it risks altering execution behavior if not grounded in a deep understanding of original conditions. Logic that was designed to execute only under rare circumstances may become more frequent, or vice versa, changing system outcomes.

This risk is amplified when conditional behavior spans multiple programs. A condition evaluated in one COBOL module may influence downstream execution paths indirectly through data changes or return codes. Extracting a single program without modeling these interactions can break implicit contracts that govern execution flow.

Managing this challenge requires identifying conditional logic not just within programs, but across execution paths. Teams must understand when conditions activate, how often they occur, and what downstream effects they trigger. Without this understanding, incremental extraction introduces behavioral divergence that is difficult to detect through testing alone.

Invocation Context Shifts and Their Hidden Effects

COBOL programs are sensitive to how they are invoked. Parameters, execution environment, and calling context influence program behavior in ways that are often undocumented. Incremental extraction frequently changes invocation mechanisms, replacing JCL-driven execution with service calls, schedulers, or distributed job frameworks.

These changes can subtly alter execution paths. Parameters may be passed differently, default values may change, and environmental assumptions may no longer hold. For example, a program that relied on implicit dataset allocation performed by JCL may encounter missing resources when invoked in a new context.

Invocation context shifts also affect error handling and restart behavior. Programs may respond differently to failures depending on how they are called, influencing recovery semantics. These differences may not surface until production incidents occur, at which point rollback becomes costly.

Understanding invocation context is therefore a prerequisite for safe extraction. Teams must map how programs are called today, what assumptions they make, and how those assumptions translate in the target environment. This concern is closely related to challenges described in program usage discovery techniques, where execution context determines actual system behavior.

Execution Order Dependencies Between Extracted and Remaining Components

Incremental extraction creates mixed execution environments where some components have migrated while others remain on the mainframe. In such environments, execution order dependencies become a critical concern. COBOL programs often assume that certain upstream steps have completed and that downstream consumers will execute in a predictable sequence.

When parts of the execution chain move independently, these assumptions may no longer hold. Distributed systems may introduce parallelism or different scheduling semantics that disrupt established order. Programs that once executed sequentially may now run concurrently, exposing race conditions or data contention issues.

These order dependencies are rarely documented explicitly. They are enforced through scheduling conventions and operational discipline rather than technical constraints. Incremental migration must therefore surface and preserve these dependencies to maintain execution continuity.
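Order dependencies that exist only as scheduling convention can be written down as a graph and checked mechanically. The sketch below uses Python's standard `graphlib.TopologicalSorter` to derive a valid order and a small helper, `order_violations`, to flag an observed run in which a step started before something it depends on. The step names and the helper are hypothetical, and the helper assumes every step appears in the observed order.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set, Tuple

# Each step maps to the set of steps that must complete before it.
depends_on: Dict[str, Set[str]] = {
    "VALIDATE": set(),
    "POST": {"VALIDATE"},
    "REPORT": {"POST"},
}

# One valid execution order that respects every declared dependency.
safe_order = list(TopologicalSorter(depends_on).static_order())


def order_violations(depends_on: Dict[str, Set[str]],
                     observed: List[str]) -> List[Tuple[str, str]]:
    """Return (step, dependency) pairs where the step ran before its dependency."""
    position = {name: index for index, name in enumerate(observed)}
    return [
        (step, dep)
        for step, deps in depends_on.items()
        for dep in deps
        if position[dep] > position[step]
    ]


# An observed order from a hypothetical hybrid run where REPORT started too early.
violations = order_violations(depends_on, ["VALIDATE", "REPORT", "POST"])
```

Running the same check against scheduler logs from both platforms gives a simple signal that a migrated step has started to overtake a legacy one.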

Failure to do so results in intermittent issues that are difficult to reproduce. Systems may appear stable under light load but fail under peak conditions when execution order diverges. Such failures undermine confidence in migration progress and force teams to pause or reverse changes.

Behavioral Drift as a Cumulative Migration Risk

Behavioral drift refers to the gradual divergence between legacy and migrated system behavior that occurs over successive migration phases. Each extraction may introduce small changes that appear acceptable in isolation but accumulate into significant differences over time.

This drift is particularly dangerous because it often escapes detection during testing. Tests typically validate functional outcomes for specific scenarios, not the full spectrum of execution paths. As a result, drift may only surface during rare conditions or edge cases.

Managing behavioral drift requires continuous validation of execution continuity. Teams must compare not only outputs, but execution paths and decision points across environments. This comparison helps identify where behavior is changing and whether those changes are intentional.
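Comparing decision points rather than only outputs can be sketched as a trace comparison. The example assumes both environments can emit an ordered list of `(decision_point, outcome)` pairs for the same input; how such traces are captured is outside the scope of this sketch, and the decision point names are invented.

```python
from itertools import zip_longest
from typing import List, Optional, Tuple

Trace = List[Tuple[str, str]]


def first_divergence(legacy: Trace, migrated: Trace) -> Optional[tuple]:
    """Return (index, legacy_entry, migrated_entry) at the first difference, or None."""
    for index, (old, new) in enumerate(zip_longest(legacy, migrated)):
        if old != new:
            return index, old, new  # a missing entry shows up as None
    return None


legacy_trace = [("ACCOUNT-STATUS", "ACTIVE"), ("LIMIT-CHECK", "PASS"), ("FEE-WAIVER", "YES")]
migrated_trace = [("ACCOUNT-STATUS", "ACTIVE"), ("LIMIT-CHECK", "PASS"), ("FEE-WAIVER", "NO")]
drift = first_divergence(legacy_trace, migrated_trace)
```

Two runs can produce the same final output while taking different branches, so a divergence reported here is a prompt for review rather than proof of a defect.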

Execution path analysis plays a critical role in this process. By understanding how code paths evolve as components migrate, organizations can control drift and maintain confidence in incremental progress. Without such control, migration efforts risk becoming irreversible experiments rather than predictable transformations.

Incremental COBOL extraction succeeds when execution continuity is treated as a first-class concern. Preserving how systems behave, not just what they compute, ensures that migration advances without compromising stability or trust.

Distributed Service Integration as the Primary Migration Risk Multiplier

Distributed services are often introduced into mainframe environments as part of modernization initiatives intended to increase flexibility and scalability. While these services enable incremental migration, they also act as significant risk multipliers when not aligned carefully with existing execution models. COBOL programs and JCL workflows were designed around deterministic execution and tightly controlled data movement. Distributed services, by contrast, operate under fundamentally different assumptions.

As incremental migration progresses, the coexistence of deterministic mainframe logic and asynchronous distributed services creates behavioral tension. Integration points become areas where execution timing, failure handling, and data consistency semantics diverge. Without deliberate control, these divergences amplify operational risk and slow migration progress, particularly when services are introduced gradually alongside legacy components.

Asynchronous Communication Versus Deterministic Batch Execution

One of the most pronounced contrasts between distributed services and mainframe workloads lies in communication models. Mainframe batch processing follows deterministic execution sequences where steps run in predefined order and completion states are known. Distributed services frequently rely on asynchronous messaging, where execution order is not guaranteed and responses may be delayed or retried.

When asynchronous services are integrated incrementally, assumptions embedded in batch workflows may no longer hold. A COBOL program may expect a downstream process to complete before the next job step executes, while a distributed service may process requests independently. This mismatch can lead to partial updates, data races, or stalled workflows.

Incremental migration complicates this further by introducing hybrid execution chains. Some steps remain deterministic while others become asynchronous, creating execution paths that were never present in the original system. Recovery procedures designed for deterministic flows may fail to account for in-flight messages or delayed processing, increasing operational uncertainty.

Understanding how asynchronous communication interacts with batch execution is critical for safe migration. Without this understanding, distributed services introduce nondeterminism that undermines the predictability of legacy workflows.

Retry Semantics and Their Impact on Legacy Assumptions

Distributed services commonly implement retry mechanisms to improve resilience. Requests may be retried automatically in response to transient failures, timeouts, or network issues. While effective in modern systems, these retries can violate assumptions held by legacy components.

COBOL programs and JCL workflows typically assume single execution per invocation. When a distributed service retries an operation that triggers mainframe processing, the result may be duplicate updates or inconsistent state. These issues are difficult to detect during testing because retries occur under failure conditions that are not always simulated.

Incremental migration increases exposure to this risk as new services are introduced alongside legacy logic. Teams may not realize that a migrated component is now subject to retry behavior that did not exist previously. Over time, this can lead to data anomalies that erode trust in the migration.

Managing retry semantics requires explicit coordination between distributed and mainframe components. Legacy systems must be protected from unintended re-execution, either through idempotency controls or integration design. Without such measures, retries become a silent risk multiplier.
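An idempotency control can be as simple as remembering which request identifiers have already been applied. The sketch below is a minimal in-memory version with hypothetical names (`IdempotentGateway`, `post_debit`, `req-001`). It assumes callers reuse the same request identifier on retry, and it is neither durable nor thread-safe, both of which a production guard in front of mainframe processing would need.

```python
from typing import Any, Callable, Dict


class IdempotentGateway:
    """Applies each request id at most once and replays the stored result on retry."""

    def __init__(self, handler: Callable[[dict], Any]) -> None:
        self._handler = handler
        self._results: Dict[str, Any] = {}

    def submit(self, request_id: str, payload: dict) -> Any:
        if request_id in self._results:
            return self._results[request_id]  # retry: do not invoke the legacy side again
        result = self._handler(payload)
        self._results[request_id] = result
        return result


balances = {"ACC1": 100}


def post_debit(payload: dict) -> int:
    """Stand-in for a legacy update that must run once per business request."""
    balances[payload["account"]] -= payload["amount"]
    return balances[payload["account"]]


gateway = IdempotentGateway(post_debit)
first = gateway.submit("req-001", {"account": "ACC1", "amount": 30})
retry = gateway.submit("req-001", {"account": "ACC1", "amount": 30})
```

The retry returns the stored result and the balance is debited once, which restores the single-execution assumption on the legacy side without changing the COBOL program.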

Schema Drift and Contract Evolution Challenges

Data contracts between systems are rarely static, especially in incremental migration scenarios. Distributed services evolve rapidly, often introducing schema changes that reflect new requirements. Legacy systems, however, are less adaptable and may depend on fixed record layouts.

Schema drift occurs when distributed services and mainframe components fall out of alignment. A field added or reinterpreted in a service may not be recognized by a COBOL program, leading to parsing errors or incorrect processing. During incremental migration, these issues may appear sporadically as services evolve independently.

The challenge is compounded by the lack of explicit contract enforcement across platforms. Distributed services may rely on flexible serialization formats, while mainframe programs expect strict layouts. Without rigorous coordination, schema changes propagate unpredictably.

This problem is closely related to challenges discussed in handling data encoding mismatches, where subtle differences in data representation disrupt integration. In incremental migration, schema drift must be actively managed to prevent integration failures.
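One way to keep a flexible service payload from drifting away from a strict record layout is to validate at the boundary and fail loudly. The sketch below uses a hypothetical three-field layout and assumes integers are zero-padded on the left and text is space-padded on the right. Real layouts would come from the copybook and would also have to deal with encoding and packed fields, which are not covered here.

```python
from typing import List, Tuple

# Hypothetical fixed-width layout standing in for a COBOL record definition.
LAYOUT: List[Tuple[str, int]] = [("account_id", 10), ("currency", 3), ("amount_cents", 11)]


def encode_record(message: dict) -> str:
    """Encode a service message as a fixed-width record, rejecting any drift."""
    known = {name for name, _ in LAYOUT}
    unknown = set(message) - known
    if unknown:
        raise ValueError(f"fields not in layout: {sorted(unknown)}")
    parts = []
    for name, width in LAYOUT:
        if name not in message:
            raise ValueError(f"missing field: {name}")
        value = message[name]
        # Assumption: integers are zero-padded, text is left-justified with spaces.
        text = str(value).zfill(width) if isinstance(value, int) else str(value).ljust(width)
        if len(text) > width:
            raise ValueError(f"{name} exceeds {width} characters")
        parts.append(text)
    return "".join(parts)


record = encode_record({"account_id": "12345", "currency": "EUR", "amount_cents": 1999})
```

Rejecting unknown or oversized fields at this point turns a schema change in the service into an immediate, attributable error instead of a shifted record that the COBOL program misreads later.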

Latency Amplification and Failure Propagation

Distributed services introduce network latency and partial failure modes that are foreign to traditional mainframe processing. While mainframe components are designed for high throughput and low latency within a controlled environment, distributed integrations introduce variability.

Latency amplification occurs when delays in distributed services cascade through execution chains. A slow response from a service may block batch progression or degrade online performance. Incremental migration exposes systems to these effects gradually, making them difficult to anticipate.

Failure propagation also becomes more complex. A transient service failure may ripple into batch delays, online transaction errors, or inconsistent data states. Recovery procedures must account for these interactions, yet they are often designed with single-platform assumptions.

Incremental migration succeeds when distributed services are integrated with full awareness of their impact on legacy execution semantics. Without this awareness, each new service increases the complexity and risk of the migration effort.

Distributed service integration is therefore not merely a technical detail but a central determinant of incremental migration success. Controlling its impact is essential for maintaining stability while modernizing across platforms.

Incremental Migration Without Full System Freezes or Parallel Runs

One of the strongest drivers behind incremental mainframe migration is the need to modernize without interrupting production operations. Large enterprises rarely have the option to freeze systems for extended periods or to run full parallel environments indefinitely. Business cycles, regulatory obligations, and customer demand require continuous availability, even as core systems evolve.

However, avoiding system freezes and long parallel runs introduces its own set of technical challenges. Incremental migration must balance forward progress with operational continuity, ensuring that changes can be introduced, validated, and, if necessary, reversed without destabilizing production. Achieving this balance requires careful control of execution scope, clear rollback boundaries, and an understanding of how coexistence affects system behavior over time.

Defining Safe Migration Increments That Limit Operational Exposure

Incremental migration succeeds when each migration step represents a bounded and controllable change. Defining such increments is far more complex than selecting individual programs or services to migrate. Safe increments must account for execution dependencies, data ownership, and failure semantics that extend beyond code boundaries.

In practice, unsafe increments often arise when migration scope is defined purely by technical convenience. Extracting a COBOL program because it appears self-contained may ignore its role in a larger execution chain. When such a program is migrated, operational exposure increases because downstream systems may behave differently under load or failure conditions.

Safe increments are defined by limiting the operational blast radius of change. This means ensuring that migrated components can fail independently without forcing broad recovery actions. Achieving this requires understanding which components share execution paths, which changes introduce new dependencies, and where rollback boundaries exist.
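The blast radius of a candidate increment can be approximated as everything reachable downstream of the component being changed. The sketch below assumes a dependency map from each component to its direct consumers is already available. The component names are hypothetical, and the map would in practice come from job flow and data flow analysis.

```python
from collections import deque
from typing import Dict, List, Set


def blast_radius(consumers: Dict[str, List[str]], changed: str) -> Set[str]:
    """Return every component that directly or indirectly consumes `changed`."""
    affected: Set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for downstream in consumers.get(node, ()):
            if downstream != changed and downstream not in affected:
                affected.add(downstream)
                queue.append(downstream)
    return affected


# Hypothetical map: component -> components that consume its output.
consumers = {
    "RATECALC": ["POSTING", "PRICING_API"],
    "POSTING": ["GL_EXTRACT"],
    "GL_EXTRACT": [],
}
radius = blast_radius(consumers, "RATECALC")
```

A program that looks self-contained in source form but has a large radius in this graph is a poor first increment, while a leaf such as `GL_EXTRACT` can fail without forcing recovery elsewhere.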

Without this discipline, incremental migration becomes risky experimentation rather than controlled transformation. Teams may be forced to pause migration or introduce ad hoc parallelism to stabilize systems, negating the intended benefits of incremental progress.

Avoiding Long-Term Parallel Execution Models

Parallel execution is often used as a risk mitigation strategy during migration. Running legacy and migrated components side by side allows teams to compare behavior and validate correctness. While effective in the short term, long-term parallelism introduces operational complexity that can outweigh its benefits.

Maintaining parallel environments requires duplicating data flows, synchronizing state, and reconciling differences between systems. Over time, these activities consume significant operational resources and introduce new failure modes. Parallel systems may drift out of alignment, making comparisons unreliable and increasing recovery complexity during incidents.

Incremental migration aims to minimize reliance on long term parallelism by enabling confident cutovers. This confidence comes from understanding execution behavior and impact before changes are introduced. When teams know how systems will behave after migration, parallel runs can be limited to targeted validation rather than prolonged coexistence.

The challenge lies in determining when parallelism is truly necessary and when it can be safely eliminated. Without clear criteria, organizations default to extended parallel operation, slowing migration and increasing cost.
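
Exit criteria for a parallel run can be written down explicitly rather than left to judgment. The following sketch assumes a hypothetical rule: cutover is allowed once a fixed number of consecutive batch cycles show no mismatches between legacy and migrated output. The `CycleResult` type and the default threshold are illustrative, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CycleResult:
    """Outcome of comparing legacy and migrated output for one batch cycle."""
    cycle_id: str
    records_compared: int
    mismatches: int


def ready_for_cutover(results, required_clean_cycles=5, min_records=1):
    """True when the most recent cycles were all clean and compared real data."""
    if len(results) < required_clean_cycles:
        return False
    recent = results[-required_clean_cycles:]
    return all(
        r.mismatches == 0 and r.records_compared >= min_records
        for r in recent
    )
```

Because only the most recent cycles count, a single mismatch resets the clock, which keeps the parallel run bounded without ending it prematurely.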

Designing Rollback Boundaries That Preserve Stability

Rollback capability is essential for incremental migration without freezes. When changes are introduced into production, teams must be able to revert quickly if unexpected behavior emerges. Designing effective rollback boundaries requires more than version control. It requires architectural consideration of state, data, and execution flow.

In mainframe environments, rollback often relies on well understood job restart and recovery mechanisms. As components migrate, these mechanisms may no longer apply directly. Distributed systems may handle rollback differently, relying on compensating actions rather than deterministic restarts.

Incremental migration must reconcile these approaches. Rollback boundaries should be defined such that reverting a migrated component does not leave the system in an inconsistent state. This often requires isolating data changes or ensuring idempotent behavior across boundaries.
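
Idempotent behavior across a boundary can be illustrated with a small sketch. It assumes each update carries a unique key (a hypothetical `key` argument) so that replaying the same update after a rollback or retry has no additional effect. The in-memory sets and dictionaries stand in for durable storage.

```python
class IdempotentLedger:
    """Applies each update at most once, keyed by an idempotency key."""

    def __init__(self):
        self.balances = {}      # account -> balance in minor units (e.g. cents)
        self._applied = set()   # keys of updates already processed

    def apply(self, key, account, amount):
        """Apply the update unless this key was already processed."""
        if key in self._applied:
            return False  # replay after a rollback or retry: no effect
        self.balances[account] = self.balances.get(account, 0) + amount
        self._applied.add(key)
        return True


if __name__ == "__main__":
    ledger = IdempotentLedger()
    ledger.apply("txn-001", "ACCT-1", 2500)
    ledger.apply("txn-001", "ACCT-1", 2500)  # replayed, ignored
    print(ledger.balances)
```

With this property in place, reverting a migrated component and replaying its input through the legacy path does not double-apply changes.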

Failure to design robust rollback boundaries leads to cautious deployment practices that slow migration. Teams hesitate to introduce changes without extensive testing, increasing time to value. Clear rollback strategies enable more frequent, confident migration steps.

Continuous Operation Under Migration Induced Change

Maintaining continuous operation while migrating requires systems to tolerate ongoing change. Load patterns, execution timing, and resource utilization may shift as components move across platforms. These shifts can expose latent performance or contention issues.

Incremental migration must therefore account for operational dynamics, not just functional correctness. Changes that are safe under nominal load may cause degradation under peak conditions. Without careful monitoring and analysis, such issues may only surface after migration steps are completed, complicating remediation.

This challenge connects closely to concerns that arise in continuous integration for mainframe refactoring, where frequent change requires disciplined integration practices. Migration demands the same discipline to ensure stability.

Continuous operation under change demands that migration steps be observable, reversible, and isolated. When these conditions are met, incremental migration can proceed without freezes or extended parallelism. When they are not, organizations are forced into conservative strategies that undermine the agility benefits of incremental transformation.

Incremental migration without system freezes is achievable, but only when operational realities are treated as first class constraints. By defining safe increments, limiting parallelism, designing rollback boundaries, and accounting for continuous operation, organizations can modernize steadily without sacrificing stability.

Smart TS XL and Deterministic Insight for Incremental Mainframe Migration

Incremental mainframe migration across COBOL, JCL, and distributed services succeeds or fails based on the quality of system understanding available before changes are introduced. In environments where execution behavior, dependencies, and data flows are only partially understood, migration decisions rely heavily on assumptions. These assumptions accumulate risk across phases, forcing teams to slow progress or introduce compensating controls that undermine the incremental model.

Smart TS XL addresses this challenge by providing deterministic system insight derived from static and impact analysis rather than runtime observation. Its role in incremental migration is not to automate transformation, but to reduce uncertainty by making execution paths, dependencies, and cross platform interactions explicit. This clarity enables migration teams to plan phased extraction and integration with confidence, even in deeply entangled legacy estates.

Precomputed Execution Intelligence Across COBOL and JCL

One of the primary contributions of Smart TS XL to incremental migration is its ability to surface execution intelligence across COBOL programs and their surrounding JCL workflows. Rather than treating programs and job streams as separate artifacts, Smart TS XL analyzes how they interact to produce actual execution behavior in production.

This precomputed intelligence reveals which programs execute under which conditions, how job steps branch, and where restart and recovery logic influences control flow. For migration teams, this information is critical when defining extraction boundaries. It ensures that programs are not migrated in isolation from the execution context that shapes their behavior.
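
To show why job step conditions matter for extraction boundaries, the sketch below models a simplified form of the JCL `COND` parameter, where a step is bypassed if the test `code operator return-code` is true for any earlier step that ran. It is a generic illustration, not output from or an interface of any tool. It ignores `EVEN`, `ONLY`, step-qualified tests, abends, and `IF/THEN/ELSE` constructs, and the step names are hypothetical.

```python
import operator

# Comparison operators accepted by the simplified COND model.
OPERATORS = {
    "GT": operator.gt, "GE": operator.ge, "EQ": operator.eq,
    "NE": operator.ne, "LT": operator.lt, "LE": operator.le,
}


def step_is_bypassed(cond, previous_return_codes):
    """cond is None or (code, op); bypass if the test holds for any prior RC."""
    if cond is None:
        return False
    code, op = cond
    return any(OPERATORS[op](code, rc) for rc in previous_return_codes)


def simulate(steps, return_codes):
    """Return the names of steps that execute, in order.

    steps: list of (step name, cond).
    return_codes: step name -> return code the step produces if it runs.
    """
    executed, observed = [], []
    for name, cond in steps:
        if step_is_bypassed(cond, observed):
            continue  # bypassed steps contribute no return code
        executed.append(name)
        observed.append(return_codes[name])
    return executed


if __name__ == "__main__":
    job = [("EXTRACT", None), ("VALIDATE", (4, "LT")), ("LOAD", (0, "NE"))]
    print(simulate(job, {"EXTRACT": 0, "VALIDATE": 4, "LOAD": 0}))
```

In this example a warning return code of 4 from `VALIDATE` is enough to bypass `LOAD`, so migrating `VALIDATE` in a way that changes its return codes would silently change which steps run.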

By understanding execution structure in advance, teams can identify safe migration candidates and avoid components whose behavior is tightly coupled to complex job logic. This reduces the likelihood of behavioral drift and minimizes stabilization effort after migration steps are completed.

Execution intelligence also supports more accurate testing strategies. Rather than relying solely on functional tests, teams can validate that migrated components preserve execution paths observed in the legacy environment. This validation reduces the risk of subtle deviations that only surface under rare conditions.

Dependency Transparency Across Mainframe and Distributed Services

Incremental migration introduces hybrid execution environments where mainframe and distributed components coexist for extended periods. In such environments, dependency transparency becomes essential. Without clear visibility into how components interact across platforms, migration decisions are constrained by uncertainty.

Smart TS XL provides dependency insight that spans languages, runtimes, and execution models. It exposes relationships that are not visible through interface definitions alone, such as shared data usage, indirect invocation paths, and conditional dependencies. This transparency enables teams to reason about the impact of migrating a component beyond its immediate scope.

For example, migrating a COBOL program may appear low risk until dependency analysis reveals downstream consumers in distributed services that rely on specific data states or timing. With this insight, teams can adjust migration sequencing or introduce safeguards to preserve stability.

Dependency transparency also reduces the need for prolonged parallel runs. When teams understand dependency structure, they can predict how changes will propagate and plan cutovers accordingly. This capability supports incremental migration without excessive operational overhead.

This approach aligns with principles discussed in static and impact analysis, where understanding relationships enables safer change. In migration contexts, the same principle enables safer phased transformation.

Supporting Phased Extraction Without Behavioral Guesswork

One of the most persistent challenges in incremental migration is behavioral guesswork. Teams often proceed based on incomplete knowledge, relying on post migration monitoring to detect issues. This reactive approach increases risk and slows progress.

Smart TS XL reduces guesswork by enabling teams to model migration scenarios before execution. By understanding execution paths and dependencies, teams can predict how behavior will change when components move. This prediction allows for proactive mitigation rather than reactive correction.

Phased extraction becomes a controlled process rather than an experiment. Teams can identify which behaviors must be preserved, which can change safely, and which require redesign. This clarity supports steady progress without repeated rollback cycles.

Behavioral insight also improves communication across teams. When migration decisions are grounded in shared understanding, coordination between mainframe and distributed teams becomes more effective. This alignment reduces friction and accelerates migration timelines.

Enabling Incremental Migration as an Engineering Discipline

Ultimately, Smart TS XL supports the transformation of incremental mainframe migration from an ad hoc effort into an engineering discipline. By providing consistent, deterministic insight into system behavior, it enables teams to apply repeatable practices across migration phases.

This discipline manifests in clearer migration plans, more predictable outcomes, and reduced variance in stabilization effort. Migration steps become smaller, safer, and easier to evaluate. Over time, organizations gain confidence in their ability to modernize without jeopardizing production stability.

Smart TS XL does not replace architectural judgment or domain expertise. Instead, it amplifies their effectiveness by grounding decisions in evidence rather than intuition. In complex hybrid environments, this grounding is essential for sustaining momentum across long running migration programs.

By reducing uncertainty and exposing system structure, Smart TS XL enables incremental mainframe migration to progress with confidence, control, and continuity.

From Incremental Experiments to Predictable Mainframe Transformation

Many incremental mainframe migration initiatives begin as controlled experiments. A small subset of programs is migrated, a limited integration is introduced, or a specific workload is modernized to validate feasibility. While these experiments often succeed technically, they frequently fail to scale. What works for an isolated component does not automatically translate into a repeatable transformation approach across a full estate.

Predictable mainframe transformation emerges when incremental migration evolves from experimentation into a disciplined engineering practice. This shift requires consistency in how migration decisions are made, how outcomes are evaluated, and how lessons are applied across phases. Without this discipline, organizations remain trapped in pilot mode, unable to accelerate progress without increasing risk.

Standardizing Migration Decisions Across Heterogeneous Systems

One of the key challenges in scaling incremental migration is the lack of standardized decision criteria. Each migration step is often evaluated independently, based on local knowledge or immediate constraints. While this flexibility supports early experimentation, it introduces inconsistency as the scope expands.

In heterogeneous environments, COBOL programs, JCL workflows, and distributed services differ widely in complexity and criticality. Without a common framework for assessing migration readiness, teams make decisions that are difficult to compare or reproduce. One team may migrate aggressively, while another adopts a conservative approach, leading to uneven progress.

Standardization does not imply rigid rules. Instead, it involves defining shared evaluation dimensions such as dependency density, execution path complexity, and failure impact. When these dimensions are applied consistently, migration decisions become comparable across systems.
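
Shared evaluation dimensions can be turned into a comparable score. The sketch below is one possible formulation: the weights, the 0.0 to 1.0 scales, and the candidate names are assumptions chosen for illustration, and each organization would calibrate its own.

```python
from dataclasses import dataclass

# Hypothetical weights for the three dimensions named above.
WEIGHTS = {
    "dependency_density": 0.4,
    "path_complexity": 0.3,
    "failure_impact": 0.3,
}


@dataclass(frozen=True)
class Assessment:
    """Scores run from 0.0 (low risk) to 1.0 (high risk) on each dimension."""
    name: str
    dependency_density: float
    path_complexity: float
    failure_impact: float


def risk_score(assessment):
    """Weighted sum of the dimension scores, rounded for stable comparison."""
    total = 0.0
    for dimension, weight in WEIGHTS.items():
        value = getattr(assessment, dimension)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{dimension} must be between 0.0 and 1.0")
        total += weight * value
    return round(total, 3)


def rank_candidates(assessments):
    """Order candidates from lowest to highest risk."""
    return sorted(assessments, key=risk_score)
```

The value of such a score lies less in the number itself than in forcing every team to assess the same dimensions in the same way.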

This consistency reduces internal friction and improves planning accuracy. Stakeholders gain clearer visibility into migration risk and effort, enabling more realistic timelines. Over time, standardized decision making transforms incremental migration from a series of isolated bets into a coordinated transformation program.

Turning Stabilization Effort Into Actionable Feedback

Early migration phases often require significant stabilization effort. Issues are discovered, workarounds are applied, and systems are tuned to restore acceptable behavior. In many organizations, this effort is treated as a temporary cost rather than a source of insight.

When stabilization outcomes are not captured systematically, teams repeat the same mistakes in subsequent phases. Similar issues reappear, consuming time and eroding confidence. Incremental migration stalls because each step feels as risky as the first.

Predictable transformation requires converting stabilization effort into actionable feedback. Teams must analyze why issues occurred, which assumptions proved invalid, and how future migrations can avoid similar problems. This feedback loop turns operational pain into engineering knowledge.

Over time, this process reduces stabilization effort per migration step. As patterns are identified and addressed proactively, migration becomes smoother and more predictable. Organizations that invest in learning from early phases accelerate later ones without increasing risk.

Aligning Teams Around Shared Execution Understanding

Incremental migration spans organizational boundaries. Mainframe specialists, distributed system engineers, operations teams, and business stakeholders all contribute to success. Misalignment between these groups is a common source of friction and delay.

Shared execution understanding provides a foundation for alignment. When teams agree on how systems behave today and how they are expected to behave after migration, coordination improves. Decisions are based on shared models rather than conflicting mental representations.

This alignment reduces handoff delays and minimizes rework. Teams can collaborate more effectively because they operate from the same understanding of dependencies and execution flow. As a result, migration steps progress more smoothly.

Alignment also improves communication with non technical stakeholders. When migration outcomes are explained in terms of execution continuity and risk reduction, expectations become clearer. This clarity supports sustained investment and commitment to long running transformation programs.

Building Confidence Through Repetition and Predictability

Confidence is a critical enabler of large scale migration. Early successes may generate enthusiasm, but confidence is sustained only when outcomes remain predictable over time. Organizations lose momentum when each migration step feels uncertain, regardless of prior experience.

Predictability builds confidence by reducing surprises. When teams can anticipate challenges and manage them consistently, migration becomes less stressful and more routine. This shift changes organizational behavior. Teams become more willing to tackle complex components and less inclined to defer difficult decisions indefinitely.

Repetition reinforces this confidence. As migration steps follow familiar patterns, teams refine their approach and improve efficiency. The transformation gains momentum, moving beyond experimentation into execution.

This evolution reflects the broader principles discussed in incremental modernization strategies, where predictability enables scale. Incremental mainframe migration reaches its full potential when it becomes a repeatable engineering practice rather than a series of isolated experiments.

By standardizing decisions, learning from stabilization, aligning teams, and building confidence through repetition, organizations transform incremental migration into a predictable path forward. This transformation enables sustained modernization without sacrificing the stability that mission critical systems demand.

Data Flow Fragmentation During Incremental COBOL and JCL Migration

Data flow fragmentation is one of the least visible yet most disruptive challenges in incremental mainframe migration. As COBOL programs and JCL workflows are migrated in phases, data ownership and processing responsibilities are often split across platforms. While this fragmentation may appear manageable at a structural level, it introduces behavioral complexity that undermines stability if left unaddressed.

In legacy environments, data flows evolved alongside execution logic. Batch cycles, dataset lifecycles, and program sequencing collectively ensured that data was produced, transformed, and consumed in predictable patterns. Incremental migration disrupts these patterns by introducing new execution contexts and partial ownership models. Without explicit control, fragmented data flows become a source of inconsistency that slows migration and increases operational risk.

Partial Data Ownership Across Platforms

Incremental migration frequently results in partial data ownership, where some records are produced or updated by migrated components while others remain under legacy control. This split ownership complicates assumptions that were previously implicit. COBOL programs and JCL workflows often assume exclusive access to datasets during specific windows, an assumption that no longer holds when distributed services are introduced.

Partial ownership creates ambiguity around which system is authoritative for specific data elements at any given time. During normal operations, this ambiguity may remain hidden. Under failure conditions or during reconciliation cycles, inconsistencies surface, requiring manual intervention to resolve discrepancies.

This challenge is amplified when ownership boundaries are not aligned with business semantics. Migrating a technical component without migrating its associated data domain leads to situations where logic and data responsibilities are misaligned. Teams must then coordinate across platforms to ensure consistency, increasing operational overhead.

Effective incremental migration requires making data ownership explicit and aligning it with migration phases. Without this alignment, data flow fragmentation introduces subtle errors that undermine confidence in migration outcomes.
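
Explicit ownership can be sketched as a registry that records a single authoritative platform per data domain and rejects writes from any other. The domain and platform names are hypothetical, and the in-memory dictionary stands in for whatever shared metadata store an organization actually uses.

```python
class OwnershipError(Exception):
    """Raised when a platform writes to a data domain it does not own."""


class OwnershipRegistry:
    """Tracks which platform is authoritative for each data domain."""

    def __init__(self):
        self._owners = {}

    def assign(self, domain, platform):
        self._owners[domain] = platform

    def owner_of(self, domain):
        return self._owners[domain]

    def check_write(self, domain, platform):
        """Raise unless `platform` is the current owner of `domain`."""
        owner = self._owners.get(domain)
        if owner != platform:
            raise OwnershipError(
                f"{platform} may not write {domain}; owner is {owner}"
            )

    def transfer(self, domain, from_platform, to_platform):
        """Hand ownership over as part of a migration phase."""
        self.check_write(domain, from_platform)  # only the owner can hand over
        self._owners[domain] = to_platform
```

Tying each `transfer` call to a migration phase makes the ownership transition an explicit, auditable event rather than a side effect of moving code.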

Temporal Fragmentation in Batch Driven Data Pipelines

Batch driven data pipelines rely heavily on temporal coordination. Data is expected to be complete, consistent, and available at specific points in time. Incremental migration disrupts this coordination by altering execution timing and introducing new processing stages.

When parts of a batch pipeline are migrated, execution duration may change. Distributed processing frameworks may complete faster or slower than mainframe jobs, shifting data availability windows. Downstream processes that rely on specific timing assumptions may encounter incomplete or stale data.

Temporal fragmentation is particularly difficult to diagnose because it often manifests intermittently. Under normal conditions, timing differences may be negligible. Under peak load or failure recovery scenarios, delays accumulate and expose hidden dependencies.

Addressing temporal fragmentation requires understanding not just data dependencies, but timing dependencies. Migration teams must identify where timing assumptions exist and ensure they are preserved or adapted explicitly. Without this effort, incremental migration introduces race conditions that compromise data integrity.
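
Timing assumptions can be checked explicitly once they are written down. The sketch below assumes a hypothetical record of when each dataset was completed and when each consuming job is scheduled to start, and it reports consumers that would read incomplete data. The dataset and job names are illustrative.

```python
from datetime import datetime


def find_timing_violations(completed_at, consumers):
    """Return (job, dataset) pairs where a job starts before its input is complete.

    completed_at: dataset name -> completion datetime, or None if unfinished.
    consumers: iterable of (job name, dataset name, scheduled start datetime).
    """
    violations = []
    for job, dataset, start in consumers:
        done = completed_at.get(dataset)
        if done is None or done > start:
            violations.append((job, dataset))
    return violations


if __name__ == "__main__":
    completed = {
        "TXN.CLEAN": datetime(2024, 1, 1, 2, 30),
        "CUST.DAILY": datetime(2024, 1, 1, 1, 0),
    }
    schedule = [
        ("BILLING", "TXN.CLEAN", datetime(2024, 1, 1, 2, 0)),
        ("STATEMENTS", "CUST.DAILY", datetime(2024, 1, 1, 2, 0)),
    ]
    print(find_timing_violations(completed, schedule))
```

Running a check like this against observed completion times after each migration step surfaces shifted availability windows before a downstream job reads stale input.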

Data Duplication and Divergence Risks

To mitigate risk, organizations sometimes duplicate data during incremental migration. Legacy systems continue to produce datasets while migrated components maintain parallel copies. While duplication can provide short term safety, it introduces long term divergence risk.

Maintaining consistency between duplicated datasets requires synchronization mechanisms that are often complex and fragile. Minor delays or failures can cause datasets to drift apart, leading to reconciliation challenges and loss of trust in data accuracy.
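
Drift between duplicated datasets can be detected with a keyed comparison. The sketch below assumes both copies can be loaded as dictionaries of records keyed by a business identifier, which is a simplification. It compares a digest of each record so that large records need not be compared field by field.

```python
import hashlib


def record_digest(record):
    """Stable digest of a record, independent of field order."""
    canonical = "|".join(f"{key}={record[key]}" for key in sorted(record))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def find_divergence(legacy, migrated):
    """Compare two copies of a dataset, each a dict of key -> record dict."""
    shared = legacy.keys() & migrated.keys()
    return {
        "missing_in_migrated": sorted(legacy.keys() - migrated.keys()),
        "missing_in_legacy": sorted(migrated.keys() - legacy.keys()),
        "different": sorted(
            key for key in shared
            if record_digest(legacy[key]) != record_digest(migrated[key])
        ),
    }
```

A report with three empty lists indicates the copies agree. Anything else identifies exactly which keys need reconciliation.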

Divergence risk increases as migration phases accumulate. Each new component added to the hybrid environment increases the number of synchronization points. Over time, managing these points becomes a significant operational burden.

This issue connects closely to challenges described in incremental data migration planning, where partial data movement must be carefully controlled. Incremental migration benefits when data duplication is minimized and ownership transitions are clearly defined.

Restoring End to End Data Flow Visibility

Fragmented data flows undermine visibility into how data moves through the system. In legacy environments, experienced teams could reason about data lineage based on job schedules and program sequences. Incremental migration obscures this lineage by distributing processing across platforms.

Without end to end visibility, diagnosing data issues becomes time consuming and error prone. Teams must trace data manually across systems, increasing mean time to resolution (MTTR) during incidents and slowing migration progress.

Restoring visibility requires mapping data flows across both legacy and migrated components. This mapping enables teams to understand where data originates, how it is transformed, and where it is consumed. With this understanding, inconsistencies can be identified and resolved more efficiently.
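
A data flow map can be as simple as a list of (source, processing step, target) edges that spans both platforms. The sketch below walks such a map backwards to find where a dataset originates and which steps touch it on the way. All dataset and step names are hypothetical.

```python
# Hypothetical flow map: (source dataset, processing step, target dataset).
FLOWS = [
    ("CUST.MASTER", "CUSTUPD", "CUST.DAILY"),
    ("CUST.DAILY", "customer-sync", "customers_table"),
    ("TXN.RAW", "TXNVAL", "TXN.CLEAN"),
    ("TXN.CLEAN", "billing-service", "invoices_table"),
    ("customers_table", "billing-service", "invoices_table"),
]


def producers_of(dataset, flows):
    """Return (source, step) pairs that write directly to `dataset`."""
    return [(src, step) for src, step, dst in flows if dst == dataset]


def trace_origins(target, flows):
    """Return (origin datasets, steps) that feed `target`, transitively."""
    origins, steps, seen = set(), set(), set()
    stack = [target]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # guards against cycles in the flow map
        seen.add(current)
        inputs = producers_of(current, flows)
        if not inputs and current != target:
            origins.add(current)  # nothing produces it: an origin
        for src, step in inputs:
            steps.add(step)
            stack.append(src)
    return origins, steps


if __name__ == "__main__":
    print(trace_origins("invoices_table", FLOWS))
```

Here a table owned by a distributed service traces back to two mainframe datasets through both COBOL steps and services, which is the kind of lineage that is hard to reconstruct manually during an incident.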

Data flow visibility also supports better migration planning. When teams understand how data flows evolve across phases, they can design migration steps that minimize fragmentation. Over time, this approach reduces complexity and stabilizes operations.

Data flow fragmentation is not an inevitable consequence of incremental migration, but it is a common one. Addressing it proactively is essential for maintaining consistency, trust, and momentum as COBOL and JCL workloads evolve across platforms.

Preserving Failure Semantics Across Incremental Migration Phases

Failure semantics define how systems behave when something goes wrong. In legacy mainframe environments, these semantics are deeply embedded in execution flow, job control, and operational procedures. Restart points, error codes, conditional branching, and recovery logic collectively determine how failures are detected, contained, and resolved. Incremental migration introduces risk when these semantics are altered unintentionally.

Preserving failure semantics across migration phases is essential for operational stability. Even when functional behavior appears unchanged, differences in how failures propagate or are handled can lead to unpredictable outcomes. Incremental migration must therefore treat failure behavior as a first class concern, ensuring continuity not only in success paths but also in error scenarios.

Restart and Recovery Logic Embedded Outside Application Code

In mainframe environments, restart and recovery logic is often distributed across JCL, scheduler configuration, and operational conventions rather than centralized within application code. COBOL programs may rely on external mechanisms to manage partial execution, checkpointing, and reruns. These mechanisms define how systems recover from failures without manual intervention.

Incremental migration frequently focuses on application logic while overlooking these external recovery constructs. When components are migrated, equivalent restart behavior may not exist in the target environment. Distributed systems often rely on different recovery paradigms, such as stateless retries or compensating transactions.

This mismatch introduces risk. A failure that was previously recoverable through a simple rerun may now require complex manual intervention. Operations teams may discover that established procedures no longer apply, increasing downtime during incidents.

Preserving restart semantics requires identifying where recovery logic resides today and ensuring it is replicated or adapted explicitly. This task is nontrivial because recovery behavior is rarely documented comprehensively. It emerges from the interaction of code, job design, and operational practice.
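
When restart behavior has to be carried into a target environment, one common adaptation is an explicit checkpoint that records progress so a rerun resumes where the failed run stopped. The sketch below is a minimal illustration: the `store` dictionary stands in for durable storage, and the `job_id` and `handler` names are hypothetical.

```python
def run_with_restart(records, store, job_id, handler):
    """Process records in order, resuming from the last committed checkpoint.

    store: dict-like mapping job_id -> index of the next record to process.
    Returns the number of records processed in this run.
    """
    start = store.get(job_id, 0)
    for index in range(start, len(records)):
        handler(records[index])      # may raise; progress so far is kept
        store[job_id] = index + 1    # commit progress only after success
    return len(records) - start


if __name__ == "__main__":
    checkpoints = {}
    processed = []
    run_with_restart(["a", "b", "c"], checkpoints, "NIGHTLY", processed.append)
    print(processed, checkpoints)
```

Because the checkpoint is written after the handler returns, a crash between the two would reprocess one record on restart, so the handler should be idempotent.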

Error Propagation Differences Between Platforms

Error propagation behavior varies significantly between mainframe and distributed environments. In traditional mainframe systems, errors are often contained within well defined execution contexts. Return codes, condition codes, and abend handling provide structured signals that guide downstream behavior.

Distributed systems propagate errors differently. Exceptions may bubble through service layers, retries may obscure original causes, and partial failures may persist without clear signals. Incremental migration introduces hybrid execution paths where these differing semantics coexist.

Without careful management, error signals may be lost or misinterpreted as components move. A failure that once halted a batch job may now trigger retries that mask the issue. Conversely, transient distributed errors may surface as critical failures in legacy components.

Understanding and aligning error propagation is essential for preserving expected behavior. Teams must map how errors flow through execution paths today and ensure that equivalent signaling exists after migration. This challenge connects closely to issues discussed in exception handling performance impact, where error handling choices influence system behavior.
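
Equivalent signaling can be approximated by translating exceptions in a migrated step back into return codes that a batch scheduler already understands. The sketch below assumes the common 0/4/8/12 severity convention. The exception classes and the specific mapping are hypothetical. Retries are bounded, and a step that succeeded only after retrying reports a warning rather than a clean success, so the retry does not mask the underlying issue.

```python
RC_OK, RC_WARNING, RC_ERROR, RC_SEVERE = 0, 4, 8, 12


class TransientError(Exception):
    """A failure that may succeed on retry, such as a timeout."""


class DataError(Exception):
    """A failure caused by invalid input; retrying will not help."""


def run_step(step, max_attempts=3):
    """Run `step` and return (return code, message) for the scheduler."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            step()
            rc = RC_OK if attempt == 1 else RC_WARNING
            return rc, f"completed on attempt {attempt}"
        except TransientError as exc:
            last_error = exc  # retry, up to max_attempts
        except DataError as exc:
            return RC_ERROR, f"data error: {exc}"
        except Exception as exc:
            return RC_SEVERE, f"unexpected failure: {exc!r}"
    return RC_ERROR, f"gave up after {max_attempts} attempts: {last_error}"
```

Downstream job steps can then keep their existing conditional logic, because the migrated component still answers in the vocabulary the batch flow was built around.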

Avoiding Silent Failure Mode Changes

One of the most dangerous outcomes of incremental migration is the introduction of silent failure mode changes. These occur when systems appear to function correctly but handle failures differently than before. Such changes may not trigger immediate alarms but degrade reliability over time.

For example, a migrated component may catch and log errors that were previously propagated, preventing downstream safeguards from activating. Alternatively, a failure may be retried automatically, delaying detection until resource exhaustion occurs.

Silent changes are difficult to detect through testing because they often manifest only under specific conditions. Operations teams may not notice until incidents occur in production, at which point diagnosis is complicated by altered behavior.

Preventing silent failure mode changes requires explicit comparison of failure behavior before and after migration. Teams must validate not only that failures occur when expected, but that they are handled in equivalent ways. This validation demands deep understanding of legacy failure semantics and their counterparts in the target environment.
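
An explicit comparison of failure behavior can be sketched as a harness that runs the same failure-inducing inputs through both implementations and records whether each one returns a value or raises. The two parsing functions are hypothetical stand-ins for a legacy routine and its migrated replacement.

```python
def observe(func, arg):
    """Record whether `func(arg)` returns a value or raises, and which."""
    try:
        return ("ok", func(arg))
    except Exception as exc:
        return ("error", type(exc).__name__)


def compare_failure_behavior(legacy, migrated, cases):
    """Return (case, legacy outcome, migrated outcome) wherever they differ."""
    differences = []
    for case in cases:
        before, after = observe(legacy, case), observe(migrated, case)
        if before != after:
            differences.append((case, before, after))
    return differences


def legacy_parse_amount(text):
    return int(text)  # raises ValueError on malformed input


def migrated_parse_amount(text):
    try:
        return int(text)
    except ValueError:
        return 0  # swallowed error: a silent failure mode change


if __name__ == "__main__":
    print(compare_failure_behavior(
        legacy_parse_amount, migrated_parse_amount, ["100", "12x"]
    ))
```

Both functions agree on valid input, so functional tests pass. Only the malformed case reveals that the migrated version converts an error into a plausible-looking value.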

Maintaining Operational Runbook Validity During Migration

Operational runbooks codify how teams respond to failures. They are built around expected failure semantics, recovery steps, and system behavior. Incremental migration threatens runbook validity when failure behavior changes without corresponding updates.

As components migrate, runbooks may become partially obsolete. Procedures that once resolved issues quickly may no longer apply, leading to confusion and delayed response. In high pressure situations, reliance on outdated runbooks increases risk.

Maintaining runbook validity requires aligning operational documentation with migration phases. Teams must update procedures as failure semantics evolve, ensuring that operations staff are prepared for new behaviors. This effort is often overlooked in technical migration planning.

Effective incremental migration treats operational readiness as integral to success. Preserving failure semantics supports continuity in operations, enabling teams to respond effectively even as systems change.

Preserving failure semantics across incremental migration phases ensures that modernization does not compromise reliability. By addressing restart logic, error propagation, silent failure modes, and operational readiness, organizations can migrate confidently while maintaining the stability that mission critical systems demand.

Incremental Migration Succeeds When Behavior, Not Technology, Leads

Incremental mainframe migration across COBOL, JCL, and distributed services is often described as a technical journey, yet its success is determined by how well system behavior is understood and preserved throughout change. The most significant risks do not arise from unfamiliar platforms or modern tooling, but from hidden execution paths, fragmented data flows, and altered failure semantics that surface only after migration is underway. When these behavioral dimensions are overlooked, incremental efforts lose predictability and momentum.

Across hybrid environments, legacy systems continue to deliver value precisely because their behavior is stable and well understood in production. Incremental migration challenges this stability by introducing partial change into deeply coupled execution models. Each migration step alters timing, dependencies, or error handling in subtle ways. Without deliberate attention to these shifts, organizations find themselves compensating through operational workarounds rather than progressing toward modernization goals.

Predictable transformation emerges when incremental migration is treated as an engineering discipline rather than a sequence of isolated initiatives. This discipline prioritizes execution continuity, dependency clarity, and failure behavior equivalence over rapid extraction. Migration steps become smaller, safer, and easier to reason about. Stabilization effort decreases as lessons learned are systematically applied, enabling steady progress without repeated disruption.

For enterprises modernizing long-lived mainframe estates, incremental migration remains the most viable path forward. Its promise lies not in avoiding complexity, but in managing it deliberately. When behavioral understanding guides architectural change, incremental migration evolves from a risk management strategy into a sustainable modernization model that preserves operational trust while enabling long-term system evolution.