Formal verification has become a defining capability for organizations that operate safety-critical and mission-dependent systems. Modernization initiatives across aviation, financial clearing, industrial control, and public-sector platforms increasingly rely on mathematically rigorous validation to ensure that critical components behave predictably under all operational conditions. Static reasoning techniques, such as those outlined in the article on logic tracing methods, now complement formal proofs by exposing structural behaviors that specifications must accurately reflect. As system complexity scales, formal verification emerges as a strategic instrument for ensuring correctness before deployment.

Critical components rarely operate in isolation, and verification teams must account for asynchronous interactions, heterogeneous code paths, and legacy subsystems integrated with modern distributed architectures. Many of these systems contain deep control flows that are not visible without advanced analysis, similar to the understanding presented in the article on hidden code paths. These insights become essential inputs for precise formal models, enabling verification teams to capture invariants, temporal constraints, and interface assumptions that govern cross-component behavior. This alignment forms the foundation for accurate proofs across multiple runtime and platform boundaries.

Regulatory frameworks place additional pressure on organizations to demonstrate correctness through deterministic evidence rather than probabilistic testing or incomplete behavioral coverage. Certification bodies in aviation, energy, medical, and financial sectors increasingly expect verification artifacts that map directly to architectural intent and documented system constraints. Guidance similar to the expectations described in SOX and DORA compliance illustrates the movement toward structured, auditable reasoning. Formal verification therefore becomes both an engineering discipline and a compliance enabler for modernization programs operating under stringent regulatory oversight.

Enterprises transitioning from tightly coupled legacy architectures to distributed cloud ecosystems or service-oriented designs face rising complexity in maintaining correctness. Subtle behavioral deviations introduced during transformation can propagate significant risk across dependent workflows, consistent with concerns identified in the analysis of logic shift detection. Formal verification offers the mathematical rigor required to evaluate these risks at scale, allowing engineering leaders to validate assumptions, expose contradictions, and ensure functional integrity throughout modernization. As a result, formal verification now plays a central role in safeguarding critical systems during architectural evolution.

Strategic Role of Formal Verification in Safety and Mission Critical Architectures

Formal verification has become foundational for enterprises operating complex, high-assurance systems where incorrect behavior produces cascading operational failures. In large organizations, mission components often span multiple technology generations, integrate with hybrid cloud platforms, and support safety-relevant workflows that demand deterministic correctness. Traditional testing validates behavior under sampled conditions, but formal verification provides mathematical guarantees that critical invariants hold across all reachable system states. This distinction becomes increasingly important as modernization introduces new integration points, concurrency models, and runtime environments that expand the potential state space. Analytical teams combine domain models, specification languages, and control-flow reasoning to create verification frameworks that evolve with the system lifecycle.

System architects also recognize that formal verification strengthens modernization governance by clarifying behavioral expectations before transformation begins. Proof artifacts establish unambiguous definitions of component responsibilities, failure conditions, and environmental assumptions. They also highlight structural issues that testing cannot reliably detect, reinforcing the role of static analysis as a prerequisite for rigorous verification. Techniques for identifying hidden path interactions, such as those discussed in detailed code path analysis, help verification teams accurately scope proofs by exposing non-obvious dependencies embedded within legacy logic. This alignment enables organizations to build modernization strategies that preserve correctness throughout architectural evolution.

Establishing Correctness Guarantees Across Heterogeneous Architectures

Critical systems frequently operate across heterogeneous platforms, including mainframes, embedded controllers, cloud services, and distributed event pipelines. Formal verification provides a unified mathematical framework to ensure correctness independent of implementation language or runtime environment. Consider a scenario in which a financial institution maintains a settlement engine written in COBOL, a risk computation service in Java, and a cloud-native orchestration tier handling asynchronous events. Without verification, subtle timing or ordering differences between these layers may expose high-impact race conditions. Formal specifications allow engineering teams to define temporal constraints, invariants, and communication protocols that apply uniformly across all components.

To validate this behavior, teams construct state-transition models that incorporate message flows, retries, persistence semantics, and timeouts. These models support temporal logic proofs guaranteeing that deadlocks, unintended reorderings, or partial updates cannot occur. Static analysis techniques help bootstrap these efforts by revealing unstructured branching or unreachable blocks that distort intended control flow. Approaches presented in discussions on logic tracing methods frequently serve as an essential precursor, ensuring formal models accurately reflect real code paths. As modernization progresses, verified properties guide refactoring, component decoupling, and architectural redesign, maintaining correctness across evolving environments.
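The state-transition modeling described above can be sketched with a small explicit-state search. The example below is a minimal illustration, not a production model checker: the transfer workflow, the `compensate_on_timeout` flag, and the retry limit are all hypothetical names chosen for this sketch. It explores every reachable state of a debit-then-credit message flow with lossy delivery and bounded retries, and reports any terminal state in which only one side of the update was applied.

```python
from collections import deque

def successors(state, max_retries, compensate_on_timeout):
    """Yield every next state of a hypothetical debit/credit transfer."""
    phase, debited, credited, retries = state
    if phase == "start":
        # Debit is persisted locally, then the credit message is sent.
        yield ("sent", True, False, retries)
    elif phase == "sent":
        # Case 1: the message is delivered and the credit is applied.
        yield ("done", debited, True, retries)
        if retries < max_retries:
            # Case 2: the message is lost and the sender retries.
            yield ("sent", debited, credited, retries + 1)
        else:
            # Case 3: retries are exhausted and the sender times out.
            refunded = False if compensate_on_timeout else debited
            yield ("aborted", refunded, credited, retries)

def find_partial_update(max_retries=3, compensate_on_timeout=True):
    """Breadth-first search for a terminal state with debit != credit."""
    init = ("start", False, False, 0)
    seen = {init}
    queue = deque([init])
    while queue:
        state = queue.popleft()
        phase, debited, credited, _ = state
        if phase in ("done", "aborted") and debited != credited:
            return state  # counterexample: a partial update
        for nxt in successors(state, max_retries, compensate_on_timeout):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return None  # property holds on every reachable state

safe = find_partial_update(compensate_on_timeout=True)
unsafe = find_partial_update(compensate_on_timeout=False)
```

With compensation enabled the search finds no violation; with it disabled the search returns the aborted state in which the debit was applied but the credit was not. Dedicated model checkers perform the same kind of exploration on far larger models and with richer property languages.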

Managing the Complexity of Failure Modes in Critical Workflows

Failure conditions in critical systems extend beyond simple exceptions to include timing deviations, partial state transitions, unavailable downstream services, or inconsistently applied configuration rules. Formal verification enables organizations to classify failure modes, assign mathematical definitions to them, and prove that recovery mechanisms behave as intended under all operational permutations. In a real-time transportation scheduling system, for example, concurrency between dispatch updates, vehicle telemetry, and constraint-driven optimization creates a combinatorial explosion of states that traditional testing cannot cover. Verification teams formalize these transitions using guarded commands or process algebra to ensure that even under degraded conditions, core invariants remain intact.

Constructing such guarantees requires an accurate understanding of how legacy logic encodes error recovery paths. Many systems more than twenty years old carry implicit fallback logic buried deep within conditional structures, and a formal model built without reconciling these paths risks overlooking critical behaviors. Static analysis tools reveal hidden error-handling branches, unused conditionals, or legacy exception structures that influence state transitions. Feeding these findings into the model allows verification teams to encode complete failure semantics into proofs. As systems evolve toward cloud-distributed architectures, additional states introduced by retries, autoscaling, and distributed consistency models can be captured in extended specifications, preserving safety guarantees throughout modernization.

Ensuring Behavioral Integrity During Incremental Modernization

Enterprises rarely replace critical systems in one phase, opting instead for incremental modernization strategies that preserve operational continuity. This staged evolution introduces uncertainty about how partially modernized components interact with legacy subsystems still performing essential functions. Formal verification provides the discipline necessary to certify behavioral integrity at each modernization milestone. For instance, when migrating a portion of a batch-driven financial reconciliation pipeline to a microservices architecture, differences in scheduling granularity or concurrency semantics may introduce nondeterministic outcomes. Through verification, engineering teams define precise behavior contracts for both legacy and modernized components, ensuring equivalence in all observable outputs.

Verification teams also rely on abstraction to maintain tractability. Legacy systems often include thousands of procedural statements that would overwhelm model checking or theorem proving if represented directly. Abstracting these components into finite models while preserving semantic correctness ensures that formal proofs remain scalable. This balance mirrors the broader modernization principle of preserving functional intent while transforming technical implementation. As modern services replace legacy routines, previously verified properties serve as regression contracts that prevent subtle deviations during refactoring, integration, or re-platforming. This disciplined pattern reduces operational risk throughout system evolution.

Using Formal Verification to Strengthen Enterprise Governance and Risk Controls

Enterprise governance frameworks increasingly emphasize rigorous, evidence-based reasoning when validating mission critical systems. Formal verification provides deterministic assurance aligned with internal risk controls and regulatory oversight. In highly regulated industries, proof artifacts become part of audit records, demonstrating that system behavior aligns with declared specifications. Techniques such as invariant preservation proofs or liveness guarantees provide regulators with measurable and reproducible evidence of correctness. This strengthens organizational defenses against operational incidents and ensures compliance with policies governing safety, resilience, and data integrity.

Moreover, governance teams benefit from structured behavioral models that formal verification produces. These models expose areas where legacy assumptions conflict with modern requirements, helping modernization boards determine when architectural redesign is necessary. Verification artifacts clarify design intent, facilitate stakeholder alignment, and reduce ambiguity during system transitions. This combination of mathematical evidence and architectural visibility provides a governance foundation resilient enough to support multi-year modernization programs spanning diverse technology stacks.

Modeling Critical Components with State Machines, Temporal Logic, and Process Algebras

Modeling serves as the foundation for formal verification, enabling engineering teams to express system behavior in mathematically rigorous constructs. Critical components in safety-relevant and mission-dependent systems require explicit representations that capture concurrency semantics, state evolution, environmental assumptions, and failure transitions. State machines, temporal logic frameworks, and process algebras support these requirements by providing structured abstractions capable of representing high-volume interaction patterns and deterministic constraints. These formalisms allow organizations to reason about correctness independent of implementation details, ensuring that modernization efforts preserve functional guarantees as codebases evolve.

A major challenge in constructing accurate models lies in reconciling deeply embedded legacy logic with modern architectural expectations. Decades-old systems often encode behavior implicitly through nested branching, shared mutable state, and side-effect-driven sequences that resist straightforward representation. Analytical teams frequently rely on intermediate static insights to guide the modeling process. Articles such as the exploration of complexity indicators provide conceptual frameworks for identifying structural hotspots that influence model fidelity. By surfacing branching structures and unbounded loops, static insights ensure that models reflect operational realities rather than simplified assumptions.

Formalizing Component State Evolution with Finite and Extended State Machines

State machine frameworks provide a disciplined mechanism to represent component behavior across discrete operational modes. In critical systems, components rarely operate in simple binary states; instead, they transition through a rich set of conditional, parameterized, or hierarchical states. For example, consider a safety interlock subsystem within an industrial automation environment. Its behavior depends not only on sensor inputs but also on supervisory commands, timing conditions, historical counters, and fault latencies. Extended state machines incorporating variables, guards, effect functions, and transition groups become essential to capture such complexity.

Verification teams construct these state machines by examining the interplay between external events and internal conditions. Legacy code often reveals numerous unstructured transitions, where branching logic embedded across multiple modules indirectly defines system states. Identifying these implicit transitions requires careful analysis of call hierarchies and persistent data dependencies. Insights from methods similar to those in the article on high-complexity detection guide modelers in identifying locations where state boundaries must be made explicit. Once formalized, state machines support invariant proofs, reachability analysis, and dead-state detection. During modernization, these verified state models serve as correctness anchors, allowing engineering teams to validate that cloud-native versions maintain the same state semantics even when execution characteristics change.
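As a rough illustration of an extended state machine with variables and guards, the sketch below models a simplified safety interlock. The modes, events, and `FAULT_LIMIT` threshold are assumptions made for this example and do not come from any particular system. The code enumerates all reachable states, checks one invariant, and reports dead states (states with no enabled transition).

```python
from collections import deque

FAULT_LIMIT = 2  # hypothetical number of faults that trips the interlock
EVENTS = ("arm", "start", "sensor_fault", "reset")

def step(state, event):
    """Return the next (mode, faults) state, or None if the guard blocks the event."""
    mode, faults = state
    if event == "arm" and mode == "IDLE" and faults == 0:
        return ("ARMED", faults)
    if event == "start" and mode == "ARMED":
        return ("RUNNING", faults)
    if event == "sensor_fault" and mode in ("ARMED", "RUNNING"):
        faults += 1
        return ("TRIPPED", faults) if faults >= FAULT_LIMIT else (mode, faults)
    if event == "reset" and mode == "TRIPPED":
        return ("IDLE", 0)
    return None

def explore(init=("IDLE", 0)):
    """Reachability analysis: collect reachable states and dead states."""
    reachable, dead = {init}, set()
    queue = deque([init])
    while queue:
        state = queue.popleft()
        nexts = []
        for event in EVENTS:
            nxt = step(state, event)
            if nxt is not None:
                nexts.append(nxt)
        if not nexts:
            dead.add(state)
        for nxt in nexts:
            if nxt not in reachable:
                reachable.add(nxt)
                queue.append(nxt)
    return reachable, dead

def invariant(state):
    """The machine must never be RUNNING once the fault limit is reached."""
    mode, faults = state
    return not (mode == "RUNNING" and faults >= FAULT_LIMIT)

reachable, dead = explore()
violations = [s for s in reachable if not invariant(s)]
```

In this toy model the search visits six states and finds neither invariant violations nor dead states. The same reachable-state set could later be compared against a modernized implementation to confirm that state semantics are preserved.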

Applying Temporal Logic to Capture Ordering, Duration, and Liveness Constraints

Temporal logic plays a pivotal role in modeling timing-sensitive and order-dependent behaviors characteristic of critical systems. Specifications expressed in Linear Temporal Logic (LTL) or Computation Tree Logic (CTL) allow organizations to define properties such as event sequencing, safety conditions, and availability requirements. Bounded reaction times are usually expressed in a timed extension such as Metric Temporal Logic or Timed CTL, since plain LTL and CTL describe ordering rather than elapsed time. Consider a payment authorization pipeline where a request must either complete within a specified timeout or transition into a controlled fallback path. Temporal logic enables architects to encode the constraint that no pending authorization may remain unresolved beyond the allowed duration.
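One way to write the payment authorization constraint is shown below. The atomic propositions pending, completed, and fallback, and the bound T, are hypothetical names introduced for this sketch. The first formula is plain LTL and states only that resolution eventually happens; the second uses a bounded eventually operator in the style of Metric Temporal Logic to add the timeout; the third is the CTL counterpart of the first.

```latex
% LTL: every pending authorization is eventually resolved
\[
\mathbf{G}\,\bigl(\mathit{pending} \rightarrow
  \mathbf{F}\,(\mathit{completed} \lor \mathit{fallback})\bigr)
\]

% Bounded variant: resolution occurs within T time units
\[
\mathbf{G}\,\bigl(\mathit{pending} \rightarrow
  \mathbf{F}_{\le T}\,(\mathit{completed} \lor \mathit{fallback})\bigr)
\]

% CTL: on all paths, from every reachable state, resolution is inevitable
\[
\mathbf{AG}\,\bigl(\mathit{pending} \rightarrow
  \mathbf{AF}\,(\mathit{completed} \lor \mathit{fallback})\bigr)
\]
```

The unbounded forms are liveness properties, while the bounded form is a safety property: a violation can be observed after finitely many steps, once T elapses without resolution.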

Constructing temporal logic specifications requires deep understanding of asynchronous interactions, retries, and nondeterministic event races. Critical systems operating within distributed environments introduce additional complexity, as partial failures or message loss may violate implicit assumptions embedded in legacy logic. Static analysis techniques help identify these assumptions by highlighting data propagation anomalies or irregular branching structures. Articles describing dependency issues showcase how architectural violations can distort temporal reasoning. By aligning temporal logic constraints with identified dependencies, teams ensure that correctness conditions remain valid across heterogeneous runtimes. These specifications become essential assets during incremental modernization, enabling regression proofs that verify sustained liveness and responsiveness even after architectural transformation.

Modeling Concurrency and Communication Protocols with Process Algebras

Process algebras such as CSP, CCS, and ACP offer a mathematically disciplined way to represent concurrent execution, synchronization primitives, and communication semantics. These models become indispensable in domains such as flight control, autonomous navigation, financial clearing networks, and large-scale event-processing engines. In these environments, the behaviors of multiple interacting components cannot be characterized by independent state machines alone; instead, formal interaction structures are needed to express message channels, rendezvous conditions, and parallel operation contexts.

A scenario illustrating this challenge can be found in real-time command dispatch systems. These systems coordinate event-driven updates across multiple subsystems, each requiring precise handling of ordering and locking semantics. A minor mismatch between intended synchronization and actual code behavior may introduce deadlock risks or inconsistent state propagation. Static insights obtained from analyzing inter-procedural interactions, as discussed in impact-strengthening analysis, help reveal where implicit communication patterns exist. Process algebra models convert these patterns into formal operators such as parallel composition, hiding, and choice. This enables automated reasoning about deadlock freedom, trace refinement, and communication integrity. As legacy components transition into cloud-distributed equivalents, process algebra proofs become critical in validating that microservices preserve expected protocol semantics.
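The parallel composition operator mentioned above can be approximated in a few lines. This is a simplified sketch of CSP-style alphabetized parallel composition for two processes, written for illustration only; the `DISPATCHER`, `WORKER`, and `WORKER_BAD` processes are hypothetical. Actions in the `sync` set require both processes to participate, while all other actions interleave freely. A joint state with no enabled move is reported as a deadlock, which assumes that the processes are not meant to terminate.

```python
from collections import deque

def parallel(p, q, sync):
    """Build the successor function of P || Q, synchronizing on `sync`."""
    def succ(state):
        sp, sq = state
        for action, p_next in p[sp]:
            if action in sync:
                # Shared action: Q must offer the same action now.
                for other, q_next in q[sq]:
                    if other == action:
                        yield action, (p_next, q_next)
            else:
                yield action, (p_next, sq)  # P moves alone
        for action, q_next in q[sq]:
            if action not in sync:
                yield action, (sp, q_next)  # Q moves alone
    return succ

def find_deadlock(p, q, sync, init):
    """Return a reachable joint state with no enabled move, or None."""
    succ = parallel(p, q, sync)
    seen, queue = {init}, deque([init])
    while queue:
        state = queue.popleft()
        moves = list(succ(state))
        if not moves:
            return state
        for _, nxt in moves:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return None

# Each process maps a local state to its (action, next_state) options.
DISPATCHER = {"d0": [("cmd", "d1")], "d1": [("ack", "d0")]}
WORKER = {"w0": [("cmd", "w1")], "w1": [("work", "w2")], "w2": [("ack", "w0")]}
# A mismatched worker that expects an acknowledgement before any command.
WORKER_BAD = {"w0": [("ack", "w1")], "w1": [("cmd", "w0")]}

SYNC = {"cmd", "ack"}
ok = find_deadlock(DISPATCHER, WORKER, SYNC, ("d0", "w0"))
stuck = find_deadlock(DISPATCHER, WORKER_BAD, SYNC, ("d0", "w0"))
```

The well-matched pair cycles forever through command, work, and acknowledgement, so no deadlock is found. The mismatched pair deadlocks in its initial state because each side waits for a synchronization the other never offers. Tools built on process algebras automate this analysis and add hiding, choice, and refinement checks.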

Formal Modeling as a Bridge Between Legacy Behavior and Modern Architectures

Formal modeling provides the connective structure between legacy operational intent and emerging modernization architectures. As organizations decompose monolithic systems into service-oriented or event-driven patterns, discrepancies can arise between historical assumptions and modern execution models. Scheduled batch processes may evolve into continuous data streams, tightly coupled subroutines may be restructured into asynchronous services, and synchronized operations may be replaced by distributed coordination mechanisms. These shifts change fundamental characteristics such as execution order, latency tolerance, consistency guarantees, and recovery semantics.

Modeling ensures that these differences are understood and validated before implementation. When legacy systems contain undocumented conditional flows or deeply embedded fallback structures, model construction becomes a discovery process. Insights similar to those provided in research on dynamic resilience validation expose overlooked behaviors that must be represented explicitly. Once converted into state machines, temporal logic specifications, or process algebra descriptions, teams can formally verify that modernization strategies preserve essential safety and correctness guarantees. During staged transitions, these models also act as regression oracles, enabling verification that each modernization increment respects previously validated system properties.

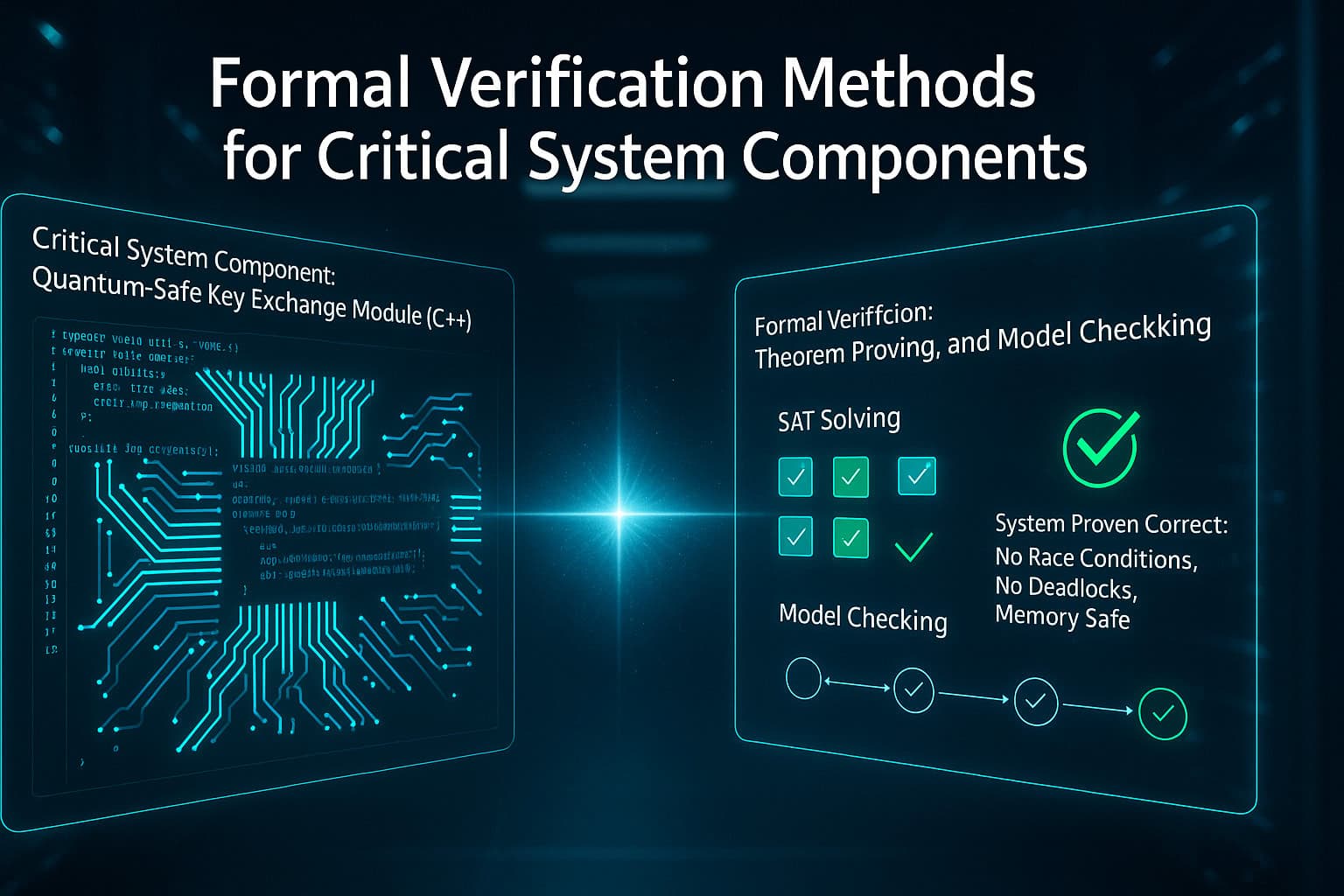

Theorem Proving Techniques for Proving Safety, Liveness, and Invariant Properties

Theorem proving provides the most expressive and rigorous foundation for validating critical system correctness. Unlike model checking, which explores state spaces automatically, theorem provers rely on structured logical reasoning to demonstrate that specified properties hold under all conditions. This capability becomes essential for large, highly parameterized systems where state spaces are too vast for automated exploration. Organizations operating safety-critical platforms depend on theorem proving to validate invariants, liveness obligations, protocol adherence, and the absence of catastrophic failure transitions. As modernization introduces new concurrency models, service orchestration patterns, or distributed dependencies, theorem proving ensures that correctness assumptions remain valid across transitional architectures.

Another advantage of theorem proving lies in its ability to verify properties of components that do not lend themselves to finite-state abstractions. Systems that incorporate unbounded data structures, recursive logic, or variable-sized datasets require deductive reasoning frameworks capable of handling general mathematical structures. Engineering teams construct formal definitions of system operations and reason inductively about all possible input and state combinations. Before doing so, analysts often use static insights to refine preconditions and derive accurate abstractions. Discussions on identifying data flow issues illustrate how legacy assumptions can propagate, influencing the formation of correct proof obligations.

Using Invariant Preservation to Guarantee Structural Safety Across Complex Flows

Invariant proofs serve as a cornerstone of deductive verification. An invariant defines a property that must hold across every system state, regardless of transitions, concurrency, or input variations. Critical systems depend on invariants to ensure structural safety, such as preventing negative account balances in financial platforms, ensuring stable actuator limits in control systems, or enforcing allowed operational ranges in medical devices. Constructing meaningful invariants requires a thorough understanding of both explicit logic and implicit behaviors embedded within legacy codebases.

Consider a scenario involving a multi-stage claims processing workflow operating across mainframe and distributed services. Historical routines may implement cascading updates, legacy fallbacks, or conditional merges that are rarely documented. To validate safety invariants, engineers first identify core data structures and define mathematical predicates representing stable conditions, such as consistency across replicated records or monotonic progression through workflow stages. Static analysis techniques similar to those described in data consistency validation reveal procedural segments where invariants might be violated during modernization. Using a theorem prover, engineers demonstrate inductively that each transition function preserves the invariant. This approach ensures that even after migrating components to cloud-native services or reengineering data pipelines, essential safety guarantees remain intact.
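The inductive argument described above reduces to two proof obligations. In the sketch below, Init, T, and Inv are generic symbols introduced for illustration: Init characterizes initial states, T(s, s') is the transition relation, and Inv is the candidate invariant. The non-negative balance predicate is only an example of what Inv might be.

```latex
% Base case: every initial state satisfies the invariant
\[
\forall s.\; \mathit{Init}(s) \Rightarrow \mathit{Inv}(s)
\]

% Inductive step: every transition preserves the invariant
\[
\forall s, s'.\; \mathit{Inv}(s) \land T(s, s') \Rightarrow \mathit{Inv}(s')
\]

% Conclusion: the invariant holds in every reachable state
\[
\forall s.\; \mathit{Reachable}(s) \Rightarrow \mathit{Inv}(s)
\]

% Example predicate (hypothetical): no account balance is negative
\[
\mathit{Inv}(s) \;\equiv\; \forall a \in \mathit{Accounts}.\; \mathit{balance}_s(a) \ge 0
\]
```

When the inductive step fails for a property that is nevertheless true, the usual remedy is to strengthen Inv with auxiliary facts until it becomes inductive.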

Proving Liveness to Ensure Progress, Completion, and Absence of Deadlock

Liveness properties ensure that systems eventually reach desired outcomes, such as completing transactions, issuing responses, or transitioning out of transient operational states. In distributed and asynchronous systems, liveness reasoning becomes particularly challenging due to race conditions, message delays, and partial failures that can trap the system in non-progressing states. Theorem proving enables organizations to define liveness expectations explicitly and demonstrate that, under formal assumptions, the system cannot remain indefinitely stalled.

Imagine an event-driven order processing engine responsible for orchestrating multi-step workflows across several microservices. During modernization, certain services are decomposed, introducing new retry loops or compensation patterns. Without formal reasoning, progress guarantees may be compromised. Verification engineers model communication behaviors and define liveness predicates reflecting guaranteed response or resolution outcomes. Structural anomalies similar to those identified in deadlock detection studies provide insight into potential starvation or indefinite waiting behaviors. With these insights, theorem proving demonstrates that no valid execution sequence can block permanently, ensuring reliable progression even in hybrid on-premise and cloud deployments.
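For finite models, the question of whether an execution can stall forever can be checked mechanically, which is a useful sanity step before attempting a deductive proof. The sketch below is an assumption-laden illustration: the order workflow, its phase names, and the `max_retries` parameter are hypothetical, and no fairness assumptions are modeled. It searches for an execution that never reaches a goal state, either by getting stuck or by cycling through non-goal states.

```python
def can_avoid_goal_forever(init, succ, is_goal):
    """True if some execution from init never reaches a goal state."""
    GREY, BLACK = 1, 2  # on the current DFS path / fully explored
    color = {}

    def dfs(state):
        if is_goal(state):
            return False
        color[state] = GREY
        nexts = list(succ(state))
        if not nexts:
            return True  # stuck in a non-goal state
        for nxt in nexts:
            mark = color.get(nxt)
            if mark == GREY:
                return True  # cycle made only of non-goal states
            if mark is None and dfs(nxt):
                return True
        color[state] = BLACK
        return False

    return dfs(init)

def make_succ(max_retries):
    """Order workflow; max_retries=None models an unbounded retry loop."""
    def succ(state):
        phase, attempts = state
        if phase == "received":
            yield ("charging", attempts)
        elif phase == "charging":
            yield ("shipped", attempts)  # payment succeeded
            if max_retries is None:
                yield ("retry", attempts)  # retry without counting
            elif attempts < max_retries:
                yield ("retry", attempts + 1)
            else:
                yield ("compensated", attempts)  # give up cleanly
        elif phase == "retry":
            yield ("charging", attempts)
    return succ

def is_goal(state):
    return state[0] in ("shipped", "compensated")

init = ("received", 0)
unbounded_stalls = can_avoid_goal_forever(init, make_succ(None), is_goal)
bounded_stalls = can_avoid_goal_forever(init, make_succ(2), is_goal)
```

The unbounded retry loop admits an execution that alternates between charging and retrying forever, so progress is not guaranteed. Bounding the retries and adding a compensation outcome removes that cycle. A theorem prover would establish the same result for arbitrary retry limits, typically with a ranking function that decreases on every retry.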

Parameterized Theorem Proving for Systems with Unbounded State and Data

Many enterprise platforms operate on unbounded datasets, dynamic queues, long-running sessions, or arbitrarily nested record structures. These characteristics exceed the capacity of finite-state model checking. Theorem proving offers mathematically expressive mechanisms to reason about unbounded state spaces through induction, coinduction, and higher-order logic. This becomes crucial for industries such as finance, telecommunications, and aerospace, where system correctness must hold regardless of data scale, operational duration, or input variability.

Consider a telecommunications billing system that maintains millions of concurrent sessions with dynamic lifecycle patterns. Legacy designs may implement recursive processing routines that must guarantee accuracy regardless of scale. Parameterized theorem proving enables analysts to define generalized behavioral rules independent of session count. Before constructing proofs, engineering teams often analyze structural patterns to locate areas where unbounded recursion or iteration occurs. Articles such as the examination of impact-driven behavior illustrate how legacy complexity must be understood before abstraction. With an accurate specification, theorem provers validate correctness for all possible system sizes, providing strong assurance during modernization, load scaling, or migration to elastic cloud infrastructure.

Encoding Failure Logic, Error Recovery, and Environmental Assumptions Into Proof Obligations

Failure handling plays a critical role in verification, particularly for systems that must maintain safe behavior in adverse or degraded environments. Theorem proving enables analysts to encode assumptions about failure modes, error propagation, fallback routines, and external system guarantees. This ensures that proofs remain valid even when components experience intermittent outages, configuration inconsistencies, or resource contention. Modern architectures amplify these concerns due to distributed communication, autoscaling, and heterogeneous processors introducing new categories of partial failures.

Take the case of a cross-platform claims adjudication system undergoing phased modernization. Some components run on legacy batch engines, others on event-driven cloud services. Failure semantics differ across these environments, potentially invalidating earlier assumptions about error propagation. Engineers define precise preconditions capturing acceptable failure behaviors, then construct proofs demonstrating that system-level safety properties remain intact under these conditions. Insights from studies on preventing cascading failures help identify edge-case transitions requiring explicit formal treatment. Embedding these into proof obligations ensures that modernization does not compromise resilience or correctness, even when failure behaviors shift due to architectural change.

Model Checking Workflows for Embedded, Real-Time, and Distributed Control Systems

Model checking provides exhaustive, automated exploration of system states, allowing verification teams to identify violations of safety, liveness, or protocol correctness without constructing manual proofs. For embedded controllers, real-time platforms, and distributed orchestration systems, model checking becomes essential due to the high density of interacting states and timing dependencies. These environments often rely on concurrent processes, interrupt-driven transitions, and deterministic scheduling requirements. Model checkers evaluate these dynamics by systematically exploring all reachable configurations under varying event orderings and environmental conditions. As enterprises modernize these mission-critical systems, model checking ensures behavioral consistency across legacy subsystems and emerging distributed components.

Another strength of model checking lies in its ability to reveal subtle inconsistencies that are not apparent through testing or simulation. Real-time constraints, clock drift, communication retries, and asynchronous message arrivals create execution paths that traditional validation rarely exercises. Legacy codebases, particularly those that have evolved over decades, may contain deeply nested conditionals, implicit fallback transitions, or timing assumptions tied to older hardware. Analytical findings from sources such as the study of control flow complexity illustrate how compounded structural patterns influence verification results. By aligning model checking with these insights, organizations build accurate abstractions that reflect real operational conditions.

Exhaustive State Exploration in Embedded Control Loops

Embedded systems in aerospace, automotive safety, industrial automation, and robotics depend on precise control loops operating within strict timing and safety boundaries. Model checking allows engineers to model control cycles, interrupts, sensor sampling, actuator commands, and fallback routines with high fidelity. A representative scenario might involve a flight-control module governing attitude adjustments based on sensor fusion inputs. The controller must guarantee safety properties such as bounded oscillation, monotonic actuator convergence, or invalid-state avoidance. Embedded loops often interact with hardware-level fault indicators, watchdog timers, and error-correction subsystems, making the complete state space significantly larger than expected.

Model checking workflows begin by defining a structured state model that incorporates both functional and timing characteristics. This may include clock variables, input ranges, hysteresis effects, and fault conditions. Legacy implementations typically reveal undocumented transitions linked to performance optimizations or hardware constraints. Analysis techniques similar to those described in latency-sensitive pattern detection highlight areas where implicit delays or synchronous assumptions influence behavior. Once the state model is established, engineers apply bounded or unbounded exploration to validate properties such as stability, error propagation limits, and recovery behavior. During modernization, especially when migrating embedded logic to hardware abstraction layers or software-defined platforms, model checking ensures that timing and safety constraints remain preserved across updated execution engines.

Real-Time Scheduling Models and Deadline Verification

Real-time systems depend on predictable scheduling guarantees, where tasks must execute within specified deadlines to maintain system integrity. These environments include autonomous navigation systems, medical infusion controllers, factory robotics, and emergency dispatch platforms. Model checking enables verification teams to evaluate scheduling policies, preemption rules, priority hierarchies, and clock synchronization mechanisms under all possible timing variations. Real-time violations such as deadline misses, jitter amplification, or priority inversion can produce catastrophic operational failures.

A scenario illustrating this concern involves an autonomous vehicle subsystem that must process sensor data, evaluate trajectories, and dispatch actuator commands within fixed cycles. When modernizing such a system for cloud-assisted features or additional compute layers, scheduling constraints may shift in subtle ways. Verification engineers construct timed automata or hybrid state models that represent each task, its deadline, and its interaction with system clocks. Analytical work on throughput versus responsiveness provides guidance for identifying areas where timing contention or load spikes influence scheduling reliability. Model checkers explore all task sequences, evaluating whether deadlines hold across worst-case ordering, message delays, or resource contention. This approach ensures that modernization does not introduce latent timing defects and that safety and operational guarantees remain consistent across heterogeneous execution environments.
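A full timed-automata analysis is beyond a short example, but the core deadline question can be illustrated with a discrete-time check. The sketch below is a simplification under explicit assumptions: independent periodic tasks, fixed-priority preemptive scheduling on a single processor, deadlines equal to periods, integer worst-case execution times, and all tasks released together at time zero. The task names and numbers are hypothetical. It requires Python 3.9 or later for `math.lcm`.

```python
from math import lcm

def first_deadline_miss(tasks):
    """tasks: list of (name, period, wcet), highest priority first.

    Simulates one hyperperiod in unit time steps and returns
    (task_name, time) for the first missed deadline, or None.
    """
    horizon = lcm(*(period for _, period, _ in tasks))
    remaining = [0] * len(tasks)  # unfinished work of each task's current job
    for t in range(horizon + 1):
        for i, (name, period, wcet) in enumerate(tasks):
            if t % period == 0:
                if remaining[i] > 0:
                    return (name, t)  # previous job overran its deadline
                if t < horizon:
                    remaining[i] = wcet  # release the next job
        # Run the highest-priority job that still has work, for one time unit.
        for i in range(len(tasks)):
            if remaining[i] > 0:
                remaining[i] -= 1
                break
    return None

# Hypothetical baseline task set (periods and execution times in ms).
baseline = [("sensor", 4, 1), ("trajectory", 5, 2), ("actuator", 10, 3)]
# After modernization, trajectory evaluation is assumed to take 3 ms.
modernized = [("sensor", 4, 1), ("trajectory", 5, 3), ("actuator", 10, 3)]

baseline_miss = first_deadline_miss(baseline)
modernized_miss = first_deadline_miss(modernized)
```

The baseline set meets every deadline over its 20 ms hyperperiod, while the modernized set causes the lowest-priority task to miss its deadline at 10 ms. Timed-automata model checkers generalize this idea to release jitter, variable execution times, and shared resources, where a single simulated schedule is no longer sufficient.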

Distributed System Behavior, Consensus, and Message Ordering Verification

Distributed systems amplify verification complexity by introducing nondeterministic message ordering, variable latency, network partitions, and scale-dependent interactions. Model checking becomes an essential instrument for verifying consensus algorithms, distributed coordination logic, and multi-node recovery protocols. Financial transaction networks, energy grid management systems, and national-scale communication infrastructures depend on these guarantees to avoid data corruption, inconsistent state updates, or cascading outages.

For example, consider a distributed asset-tracking platform coordinating updates across multiple geographic regions. Legacy versions may rely on synchronous calls, while modernized variants incorporate asynchronous messaging, queue-based delivery, or gossip protocols. Verification engineers construct models capturing message loss, delay, duplication, and temporary partitioning. Insights from research into fault injection analysis help define conditions under which distributed components must preserve safety properties. Model checking evaluates whether consensus holds, whether liveness persists during network instability, and whether replicated states remain consistent across all nodes. As systems migrate into cloud or multi-region environments, these checks ensure operational continuity regardless of scale, latency, or topology shifts.
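
As a rough illustration of how such a model is explored, the sketch below enumerates every delivery order of a small set of update messages, with each message delivered twice to model duplication, and collects the final replica states. Message loss and partitions are deliberately left out to keep the example short. The two update rules and the message values are hypothetical.

```python
from itertools import permutations


def apply_naive(state, msg):
    # Overwrite with whatever arrives last, regardless of version.
    return msg


def apply_versioned(state, msg):
    # Keep the update with the highest version number.
    return msg if msg[0] > state[0] else state


def final_states(apply, messages, duplicates=1):
    """Return the set of final replica states over every delivery order."""
    deliveries = list(messages) * (1 + duplicates)
    outcomes = set()
    for order in set(permutations(deliveries)):
        state = (0, None)  # (version, value) before any update
        for msg in order:
            state = apply(state, msg)
        outcomes.add(state)
    return outcomes


# Hypothetical updates as (version, value) pairs.
updates = [(1, "A"), (2, "B"), (3, "C")]

print(final_states(apply_naive, updates))
print(final_states(apply_versioned, updates))
```

The naive rule ends in a different state depending on which message happens to arrive last, while the versioned rule converges to a single state under every reordering and duplication explored here. A full model would add loss and partition events and check liveness as well as convergence.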

Detecting Subtle Interleavings and Partial Order Violations Introduced During Modernization

Modernization frequently changes concurrency patterns, introducing new event sequences or eliminating serialized workflows that once guaranteed correctness. These transformations can generate partial order violations, unexpected interleavings, or race conditions that were previously impossible. Model checking provides the granular visibility required to detect these issues before deployment. Teams construct models reflecting both legacy and modernized concurrency structures and compare behavior through refinement checking, trace equivalence, or counterexample analysis.

Consider a global payment settlement platform historically driven by batch updates. During modernization, settlement logic is decomposed into microservices operating asynchronously. While this transition improves scalability, it also introduces new timing and ordering combinations. Static insights similar to those provided in actor-based flow integrity reveal areas where data propagation semantics may shift. By applying model checking, engineers detect cases where partial updates propagate inconsistently or where asynchronous retries reorder events beyond acceptable constraints. As modernization advances, these verifications ensure that distributed behavior conforms to intended design semantics and that newly introduced concurrency does not compromise correctness or regulatory compliance.
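
A minimal sketch of this comparison, assuming a hypothetical balance that two services update with a read step followed by a write step: the legacy model allows only serialized executions, the modernized model allows every interleaving, and any outcome reachable only in the modernized model is flagged.

```python
def interleavings(a, b):
    """Yield every merge of a and b that preserves each sequence's own order."""
    if not a or not b:
        yield list(a) + list(b)
        return
    for rest in interleavings(a[1:], b):
        yield [a[0]] + rest
    for rest in interleavings(a, b[1:]):
        yield [b[0]] + rest


# Hypothetical adjustments applied by each service.
AMOUNTS = {"credit": 50, "debit": -30}


def run(schedule, balance=100):
    """Execute read/write steps; each write uses that service's last read."""
    snapshot = {}
    for service, step in schedule:
        if step == "read":
            snapshot[service] = balance
        else:
            balance = snapshot[service] + AMOUNTS[service]
    return balance


credit = [("credit", "read"), ("credit", "write")]
debit = [("debit", "read"), ("debit", "write")]

# Legacy batch behavior: one service finishes before the other starts.
legacy_outcomes = {run(credit + debit), run(debit + credit)}
# Modernized behavior: steps of the two services may interleave freely.
modern_outcomes = {run(s) for s in interleavings(credit, debit)}

violations = modern_outcomes - legacy_outcomes
print(sorted(legacy_outcomes), sorted(modern_outcomes), sorted(violations))
```

The outcomes in `violations` correspond to lost updates: final balances that no serialized execution can produce. This is a simplified outcome comparison, not a full refinement or trace-equivalence check, but it shows the kind of counterexample such checks surface.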

Abstract Interpretation and Static Analysis as a Bridge to Full Formal Verification

Abstract interpretation provides the mathematical foundation needed to approximate dynamic behavior without executing code, making it a critical precursor to formal verification in safety-sensitive systems. Its lattice-based semantics allow organizations to model variable ranges, control-flow constraints, and data-propagation characteristics at scale, especially in legacy environments with tens of millions of lines of code. By constructing sound over-approximations of all feasible execution paths, abstract interpretation identifies invariants, impossible states, and stability properties that theorem proving and model checking later rely on. This alignment becomes indispensable when modernizing distributed, mission-critical systems containing complex data dependencies and undocumented workflows.

Static analysis complements abstract interpretation by delivering structural insights that clarify where formal models must focus. Legacy architectures frequently contain deeply nested conditionals, recursive flows, environmental assumptions, or platform-specific behaviors that formal verification cannot incorporate without accurate abstraction. Analytical methods such as multi-procedural flow analysis, dependency resolution, and data-flow tracing reveal hidden side effects or state mutations essential for formalization. Explorations into topics such as impact analysis patterns illustrate how organizational understanding of execution drivers informs more accurate proof obligations. When integrated strategically, static analysis and abstract interpretation form a pipeline that transforms complex codebases into verifiable specifications with mathematical precision.

Deriving Sound Over-Approximations for Large and Heterogeneous Codebases

Large enterprise systems contain code spanning multiple paradigms, decades, and operational domains. Abstract interpretation is uniquely positioned to unify this diversity by building semantic approximations that remain valid regardless of implementation specifics. A global financial clearing system, for example, might include COBOL settlement logic, Java orchestration services, Python analytics modules, and real-time messaging infrastructure. Each introduces unique behaviors, but formal verification requires a consistent semantic model. Abstract interpretation achieves this by mapping all constructs into unified abstract domains, such as intervals, octagons, symbolic constraints, or relational abstractions, that generalize behavior while preserving soundness.

Constructing these abstractions requires careful handling of loops, dynamic structures, and inter-procedural flows. Legacy systems often use nested loops with evolving state variables tied to business rules encoded across procedural layers. To prevent under-approximation, analysts compute fixpoints that represent stable equilibrium conditions for all possible executions. Static analysis findings from areas such as scalable dependency mapping highlight where abstraction boundaries must be adjusted to capture indirect state transitions. Once over-approximations converge, they serve as the backbone for invariant generation, state-machine construction, and subsequent deductive or automated verification. During modernization, these approximations ensure new implementations maintain the full behavioral envelope required for correctness guarantees.
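
The fixpoint computation can be sketched on the interval domain for a single counting loop. The example below assumes a loop of the form `while i < bound: i = i + 1` and uses widening to force convergence, followed by one narrowing step to recover precision. The function names are illustrative and do not come from any particular analyzer.

```python
import math


def join(a, b):
    """Least upper bound of two intervals (lo, hi)."""
    return (min(a[0], b[0]), max(a[1], b[1]))


def leq(a, b):
    """True when interval a is contained in interval b."""
    return b[0] <= a[0] and a[1] <= b[1]


def widen(old, new):
    """Jump any unstable bound to infinity so iteration terminates."""
    lo = old[0] if new[0] >= old[0] else -math.inf
    hi = old[1] if new[1] <= old[1] else math.inf
    return (lo, hi)


def body(head, bound):
    """Abstract effect of one iteration of: while i < bound: i = i + 1."""
    lo, hi = head[0], min(head[1], bound - 1)  # restrict to the guard i < bound
    if lo > hi:
        return None  # the guard can never be true
    return (lo + 1, hi + 1)


def loop_head_invariant(init, bound):
    """Over-approximate the values of i at the loop head."""
    head = init
    while True:
        after = body(head, bound)
        new = init if after is None else join(init, after)
        if leq(new, head):  # post-fixpoint reached
            break
        head = widen(head, new)
    after = body(head, bound)  # one narrowing step to tighten the bound
    return init if after is None else join(init, after)


print(loop_head_invariant((0, 0), 100))
```

For an initial interval of `(0, 0)` and a bound of 100, widening first jumps the upper bound to infinity and the narrowing step brings it back to 100, giving the loop-head invariant `0 <= i <= 100`. Real analyzers apply the same idea across whole control-flow graphs and richer domains.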

Extracting Implicit Invariants and Behavioral Constraints Hidden in Legacy Logic

Legacy applications often encode correctness constraints implicitly rather than through explicit documentation or design contracts. These invariants may reside in variable usage conventions, loop termination structures, fallback paths, or error-recovery logic embedded over decades of incremental development. Abstract interpretation reveals these hidden invariants by analyzing stable properties across all possible paths. For instance, in a national benefits processing system, constraints ensuring non-negative balances, monotonic state progressions, or allowable field combinations may never be explicitly stated yet hold true across millions of historical executions. Formal verification cannot proceed reliably without capturing these properties.

To surface them, analysts evaluate abstract states across loops, branches, and module boundaries. Because invariants often emerge from repeated convergence of abstract states, identification requires global reasoning rather than local inspection. Studies examining data propagation anomalies show how subtle field interactions can distort correctness if omitted from models. Once extracted, invariants are formalized as predicates in theorem proving environments or as properties in model checking frameworks. These constraints then become formal guarantees that must hold across modernization activities such as data-schema migration, service decoupling, or distributed execution. As modernization progresses, extracted invariants serve as regression contracts preserving historical correctness under new architectures.

Using Abstract Interpretation to Identify Verification Boundaries and Model Reduction Points

Formal verification requires well-defined boundaries; proving an entire enterprise system monolithically is neither tractable nor necessary. Abstract interpretation identifies natural partitions that support modular verification. For example, an energy grid control platform may consist of forecasting modules, sensor-input filters, regulator algorithms, and dispatch logic. Although all interact, not every interaction is relevant to every proof obligation. Abstract interpretation helps isolate semantic regions where behavior stabilizes or risks propagate, enabling verification engineers to determine which subsystems require deep proof and which can remain abstract.

This boundary identification relies heavily on analyzing interdependencies, state-sharing patterns, and mutation propagation chains. Insights from topics such as dependency-driven modernization illustrate how structural simplification supports stronger reasoning. By identifying areas of controlled side effects or deterministic transitions, analysts construct reduced formal models suitable for theorem proving or model checking. These reductions drastically improve verification performance by eliminating irrelevant state variables or execution paths. During modernization, model reduction ensures that newly introduced architectural features such as asynchronous messaging or streaming pipelines do not invalidate assumptions necessary for sound reasoning.
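
One common reduction of this kind is a cone-of-influence computation: starting from the variables a property mentions, follow dependencies backward and keep only what can affect them. The sketch below assumes a hypothetical dependency map for the energy grid example in which each variable lists the variables its next value reads.

```python
def cone_of_influence(depends_on, property_vars):
    """Return every variable that can influence the property variables."""
    keep, stack = set(), list(property_vars)
    while stack:
        var = stack.pop()
        if var not in keep:
            keep.add(var)
            stack.extend(depends_on.get(var, ()))
    return keep


# Hypothetical dependency map: variable -> variables its update reads.
depends_on = {
    "dispatch_cmd": ["regulator_out", "breaker_state"],
    "regulator_out": ["filtered_sensor"],
    "filtered_sensor": ["raw_sensor"],
    "forecast": ["weather_feed", "history"],
    "report_total": ["forecast", "dispatch_cmd"],
}

relevant = cone_of_influence(depends_on, {"dispatch_cmd"})
print(sorted(relevant))
```

For a property about `dispatch_cmd`, the forecasting and reporting variables fall outside the cone and can be dropped from the model, which shrinks the state space the model checker has to explore.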

Connecting Abstract Semantics to Executable Proof Obligations in Modern Verification Tools

Once abstractions stabilize, they must be translated into concrete proof obligations for formal verification engines. This translation includes generating inductive invariants, framing preconditions, defining permissible state transitions, and constructing behavioral contracts that model checkers or theorem provers can evaluate. This step forms the bridge between static reasoning and mathematical verification. For instance, a telecommunications routing engine undergoing modernization may rely on constraints ensuring that no routing table becomes empty during failover. Abstract interpretation identifies the conditions under which such states become reachable. Verification teams then encode these conditions into temporal logic or inductive reasoning frameworks to ensure that failover logic behaves as intended across all network conditions.
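
For the routing table example, the inductive obligations are: the invariant holds in every initial state, and every transition from a state satisfying the invariant leads to a state that also satisfies it. The sketch below checks both by brute force over a tiny, hypothetical state space. The route names and the two failover rules are assumptions made for illustration.

```python
from itertools import combinations

ROUTES = ("primary", "backup", "default")
# Every possible routing table over the three hypothetical routes.
STATES = [frozenset(c) for n in range(len(ROUTES) + 1) for c in combinations(ROUTES, n)]


def non_empty(table):
    """Invariant: the routing table always holds at least one route."""
    return len(table) > 0


def failover_with_fallback(table):
    """A route fails; if nothing remains, the default route is installed."""
    for route in table:
        remaining = table - {route}
        yield remaining if remaining else frozenset({"default"})


def failover_without_fallback(table):
    """A route fails and is simply removed."""
    for route in table:
        yield table - {route}


def failed_obligations(init_states, invariant, successors):
    """Return the initiation and consecution obligations that do not hold."""
    failures = [("init", s) for s in init_states if not invariant(s)]
    for state in STATES:
        if invariant(state):
            failures += [("step", state, nxt) for nxt in successors(state) if not invariant(nxt)]
    return failures


init = [frozenset({"primary", "backup"})]
print(failed_obligations(init, non_empty, failover_with_fallback))
print(failed_obligations(init, non_empty, failover_without_fallback))
```

The check ranges over all states that satisfy the invariant, not only reachable ones, which is what makes it an inductiveness check rather than a reachability search. A theorem prover or SMT-based tool would discharge the same obligations symbolically instead of by enumeration.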

Static insights provide critical context when forming these obligations. Explorations into pattern tracing methodologies demonstrate how operational sequences shape verification requirements. By aligning abstract semantics with these execution patterns, the resulting proof obligations maintain fidelity to real system behavior. As modernization introduces new architectural abstractions, verification teams regenerate obligations incrementally, ensuring that emerging system variations remain consistent with historically validated correctness conditions. This ensures formal verification remains a continuous, architecture-aligned discipline rather than a one-time exercise.

Contract Based Design and Assume Guarantee Reasoning for Complex System Interfaces

Contract based design provides a rigorous method for defining the exact behavioral expectations of critical system components. In high assurance and modernization sensitive environments, components rarely operate in isolation. Instead, their correct behavior depends on the guarantees provided by upstream and downstream modules. Contracts capture these relationships as formalized assumptions and guarantees that define how components must behave under every permissible condition. These contracts become the foundation for systematic verification because they transform loosely defined requirements into precise logical specifications. As distributed architectures and service oriented designs replace monolithic systems, contract based design becomes essential for maintaining predictable operational behavior.

Assume guarantee reasoning allows verification teams to decompose large systems into manageable subsets. Instead of proving properties for the entire system at once, each component is verified independently against its contract. The global system is correct if every component satisfies its contract and each component's assumptions are discharged by the guarantees of the components and environment it depends on. This compositional reasoning is especially important in modernization initiatives because legacy components often contain implicit assumptions that differ from those expected in modernized services. Analytical work related to cross platform consistency demonstrates how mismatches introduced during modernization can propagate subtle errors if interface assumptions are not formalized. Contract based design prevents these inconsistencies by enforcing clear and verifiable behavioral boundaries.

Defining Precise Interface Responsibilities Across Heterogeneous Components

Critical systems frequently involve heterogeneous components that differ in timing models, state semantics, error handling conventions, and message formats. Contract based design provides a structured approach to define responsibilities across these boundaries. Consider a modernization program migrating a claims adjudication module from a mainframe batch process into an event driven microservice. The legacy component assumes that records arrive in sorted order and that retries occur through scheduled batch reruns. The modernized component, however, may receive unordered asynchronous events with varying levels of partial completion. Without explicit interface contracts, misalignment between expectations produces inconsistent state updates or silent data divergence.

Verification engineers begin by documenting the preconditions that the receiving service assumes, such as data ordering constraints or valid field combinations. They then define guarantees such as monotonic record updates or bounded response times. Insights from analyses of schema evolution impact often guide the discovery of hidden conventions. Once contracts are established, engineers verify that each component satisfies its guarantees when its assumptions hold. This process ensures architectural integrity even as modernization modifies execution topology, scheduling semantics, or deployment environments. Contracts also serve as regression artifacts that ensure future enhancements do not silently violate established behavioral boundaries.
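
A contract of this kind can be sketched as a pair of predicates: an assumption over the input and a guarantee over the input and output. The example below models the claims scenario with a hypothetical "latest record per claim" component. All names and record shapes are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Contract:
    assume: Callable      # predicate over the input
    guarantee: Callable   # predicate over (input, output)


def check(component, contract, inputs):
    """Classify each input so blame falls on the right side of the interface."""
    verdicts = []
    for events in inputs:
        if not contract.assume(events):
            verdicts.append("assumption violated")
        elif contract.guarantee(events, component(events)):
            verdicts.append("ok")
        else:
            verdicts.append("guarantee violated")
    return verdicts


def legacy_latest(events):
    """Legacy logic: the last record seen for a claim wins."""
    latest = {}
    for claim, seq in events:
        latest[claim] = seq
    return latest


def modern_latest(events):
    """Modernized logic: the highest sequence number for a claim wins."""
    latest = {}
    for claim, seq in events:
        latest[claim] = max(seq, latest.get(claim, seq))
    return latest


def is_sorted(events):
    return all(a[1] <= b[1] for a, b in zip(events, events[1:]))


def keeps_max(events, out):
    return all(out[c] == max(s for c2, s in events if c2 == c) for c in out)


batch_contract = Contract(assume=is_sorted, guarantee=keeps_max)
event_contract = Contract(assume=lambda events: True, guarantee=keeps_max)

in_order = [("c1", 1), ("c2", 1), ("c1", 2)]
shuffled = [("c1", 2), ("c2", 1), ("c1", 1)]

print(check(legacy_latest, batch_contract, [in_order, shuffled]))
print(check(legacy_latest, event_contract, [in_order, shuffled]))
print(check(modern_latest, event_contract, [in_order, shuffled]))
```

Under the batch contract the legacy component is never at fault, because unordered input violates the assumption rather than the guarantee. Once the assumption is dropped to reflect asynchronous delivery, the same legacy logic fails its guarantee, while the modernized variant satisfies it.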

Compositional Verification for Large Scale Modernization Programs

Assume guarantee reasoning enables verification at scale by decomposing large system proof obligations into smaller verifiable units. This is especially relevant for enterprises modernizing systems with millions of lines of code across multiple platforms. Attempting to reason about such systems monolithically is computationally infeasible. Compositional reasoning solves this by verifying each component under explicitly stated assumptions. These local proofs are then composed to infer system level correctness.

A transportation routing system provides a useful scenario. Legacy modules calculate optimal routes using deterministic algorithms. Modernized microservices introduce parallel path exploration, asynchronous messaging, and distributed data caches. Without structured decomposition, verifying end to end routing correctness becomes intractable. Verification teams define contracts that capture required behaviors such as consistency of routing updates or availability of geospatial indexes. Studies related to impact analysis for modernization highlight how legacy assumptions often remain implicit. Once contracts clarify these responsibilities, each component is verified independently, making the overall reasoning process tractable. As modernization proceeds in phases, compositional verification ensures that newly introduced services preserve correctness even before the full migration completes.
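
The composition step reduces to checking that what one component guarantees is enough to satisfy what its consumer assumes. The sketch below does this by enumeration over a small, hypothetical domain of routing update version sequences. In practice the implication would be discharged by a solver rather than by listing values.

```python
from itertools import product

VERSIONS = (1, 2, 3)
# All sequences of up to three update versions (a deliberately tiny domain).
DOMAIN = [seq for n in range(4) for seq in product(VERSIONS, repeat=n)]


def strictly_increasing(seq):
    return all(a < b for a, b in zip(seq, seq[1:]))


def no_duplicates(seq):
    return len(set(seq)) == len(seq)


def undischarged(guarantee, assumption):
    """Values the producer may emit that the consumer does not accept."""
    return [v for v in DOMAIN if guarantee(v) and not assumption(v)]


# Hypothetical contracts: the route cache assumes strictly increasing versions.
consumer_assumption = strictly_increasing
legacy_guarantee = strictly_increasing  # deterministic, serialized calculator
modern_guarantee = no_duplicates        # parallel exploration, any order

print(undischarged(legacy_guarantee, consumer_assumption))
print(undischarged(modern_guarantee, consumer_assumption))
```

The legacy producer discharges the consumer's assumption, so the two local proofs compose. The modernized producer does not, and each value returned is a concrete interface mismatch, such as `(2, 1)`, that must be resolved by strengthening the producer or weakening the consumer.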

Handling Uncertain and Variable Environmental Conditions in Distributed Systems

Distributed systems operate under variable conditions that affect latency, throughput, ordering, and fault behavior. Contract based design accommodates these uncertainties by formalizing environmental assumptions that must hold for system guarantees to remain valid. For instance, a payment orchestration system may assume upper bounds on message delays, minimum consistency guarantees from storage services, or predictable retry behavior from dependent microservices. These assumptions become part of the contract and allow verification teams to determine precisely when guarantees apply.

When modernizing such systems, environmental characteristics often change. Migration to cloud regions introduces additional network variance. Replacement of synchronous database calls with asynchronous queues shifts ordering semantics. Analytical insights from concurrent execution behaviors reveal how environmental changes influence component logic. Contracts incorporate these dependencies to ensure correctness across varying runtime conditions. Verification teams then use assume guarantee reasoning to prove that even under worst case but permissible scenarios, global properties such as liveness, data coherence, and idempotency remain intact. By explicitly documenting environmental assumptions, enterprises avoid accidental regression during architecture transitions.

Ensuring Behavioral Stability During Incremental and Hybrid Deployments

Modernization rarely happens in a single transformation. Instead, organizations operate hybrid architectures where legacy components and modernized services coexist. Contract based design helps maintain stability during these transitional states by specifying the exact behavioral interfaces that must hold before integration. Consider a global logistics system where tracking updates originally flowed through centralized mainframe processing. Migration introduces distributed processing nodes and region specific services. Failure to document interface assumptions produces inconsistent updates or out of order state transitions.

Verification teams establish precise contracts that describe required properties such as ordering guarantees, event completeness, and validation logic. Analytical findings related to dominant dependency risks can reveal areas where subtle structural changes produce unexpected behavior. Assume guarantee reasoning allows teams to verify correctness locally before components are integrated into hybrid deployments. As modernization progresses, each new component is validated within the context of the evolving contractual framework. This staged validation ensures that the system preserves global behavioral properties even as individual modules change their implementation details or execution environments.

Integrating Formal Methods into CI/CD, DevSecOps, and Assurance Pipelines

Integrating formal verification into enterprise delivery pipelines requires a shift from isolated correctness checks to continuous, automation aligned reasoning. Safety critical and modernization driven systems operate in environments where changes occur frequently, often across distributed teams and hybrid architectures. Without continuous verification, even minor updates risk altering behavior in ways that violate previously validated assumptions. Organizations therefore incorporate theorem proving, model checking, and contract based validation into CI and CD workflows to ensure that correctness expectations remain synchronized with evolving codebases. This integration bridges development, quality engineering, and architectural governance.

DevSecOps practices strengthen this alignment by embedding security and correctness responsibilities throughout the pipeline. Formal methods enhance these responsibilities by identifying structural risks that automated testing cannot detect. The introduction of cloud based services, microservice boundaries, and event driven patterns increases surface area for defects that arise from concurrency, ordering, or interface misalignment. Studies such as the examination of CI CD analysis integration highlight how automated reasoning supports both security and modernization objectives. By tying formal verification checks to each commit, build, or deployment stage, organizations transform correctness into a continuous and enforceable discipline.

Embedding Model Checking and Property Verification into Build Pipelines

Model checking integrates effectively into CI/CD workflows because it can run automatically after each code change, validating that safety, liveness, and ordering properties remain intact. This is especially important in large scale modernization initiatives where components are gradually rewritten or replatformed. Consider an enterprise risk calculation engine being migrated from a batch driven mainframe architecture to a distributed microservice topology. Even small changes in message routing, scheduling intervals, or data validation steps can introduce new execution paths that violate expected invariants.

Verification teams configure model checking stages within the pipeline to trigger on each merge or deployment. These stages generate state models, apply abstraction rules, and evaluate properties using bounded or unbounded search strategies. Analytical work on regression risk detection provides insight into identifying performance and correctness regressions that surface only under specific timing or load conditions. Model checking complements these methods by ensuring that structural and logical conditions hold across all possible execution traces. During modernization, each successful check confirms that incremental transformations do not compromise established correctness guarantees. Failures produce counterexample traces that guide developers in correcting issues before they reach production.
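
A pipeline stage of this kind can be sketched as a breadth-first search that returns the shortest trace to a violating state and maps the result to an exit code. The message model below is hypothetical: a producer sends up to two messages, a consumer applies them, and an optional retry rule re-delivers without deduplication.

```python
from collections import deque


def find_counterexample(initial, successors, invariant):
    """Return the shortest trace to a state violating the invariant, or None."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:  # walk parent links back to the start
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


def make_successors(retry_enabled):
    """Hypothetical model; a state is (messages sent, messages applied)."""
    def successors(state):
        sent, applied = state
        if sent < 2:
            yield (sent + 1, applied)   # producer sends a message
        if applied < sent:
            yield (sent, applied + 1)   # consumer applies a pending message
        if retry_enabled and sent > 0:
            yield (sent, applied + 1)   # retry re-delivers without deduplication
    return successors


def never_over_applied(state):
    sent, applied = state
    return applied <= sent


trace = find_counterexample((0, 0), make_successors(True), never_over_applied)
gate_exit_code = 0 if trace is None else 1  # a non-zero code would fail the stage
print(trace, gate_exit_code)
```

With the retry rule enabled, the search reports a four-state trace ending in a message applied twice. With it disabled, no violation is reachable and the gate passes. A real stage would invoke an established model checker and publish its counterexample as a build artifact.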

Using Symbolic Reasoning to Detect Subtle Logic Deviations Across Rapid Iterations

Symbolic reasoning tools allow pipelines to detect logic deviations that bypass conventional testing. These tools evaluate code paths by representing variables and system states symbolically rather than concretely. This approach reveals structural deviations introduced during refactoring, replatforming, or interface redesign. A representative scenario involves an enterprise payment authorization module undergoing staged modernization. Legacy logic includes implicit fallback behavior that only triggers under rare timing conditions. When the module is reimplemented as an asynchronous service, symbolic analysis identifies differences in how failure paths propagate.

When integrated into CI/CD workflows, symbolic reasoning captures these deviations during early pipeline stages. Engineers define symbolic properties such as normalization conditions, ordering requirements, or invariant preservation obligations. Static insights from work on automated code review patterns demonstrate how static and symbolic reasoning collaborate to surface hidden issues. Symbolic reasoning engines run within the pipeline to compare behavior before and after each change. This process ensures that modernization does not introduce subtle but high impact logic errors. As systems evolve toward distributed patterns, symbolic checks help maintain equivalence between legacy behavior and modern implementation semantics.
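
The before-and-after comparison can be approximated without a symbolic engine by checking two implementations against each other over a bounded input domain. The sketch below is that weaker, bounded stand-in, not symbolic execution: a solver-backed tool would reason over all values at once. Both authorization functions and the offline limit of 50 are hypothetical.

```python
from itertools import product


def legacy_authorize(amount, balance, timed_out):
    if timed_out:
        return amount <= 50  # implicit offline fallback (hypothetical limit)
    return amount <= balance


def modern_authorize(amount, balance, timed_out):
    if timed_out:
        return False         # the asynchronous service declines on timeout
    return amount <= balance


def differences(f, g, domains):
    """Return every input in the bounded domain where f and g disagree."""
    return [args for args in product(*domains) if f(*args) != g(*args)]


# Bounded domain: a few amounts, a few balances, and both timeout outcomes.
domains = (range(0, 101, 25), range(0, 101, 50), (False, True))

mismatches = differences(legacy_authorize, modern_authorize, domains)
print(mismatches)
```

Every mismatch found here has `timed_out` set to `True`, which points directly at the fallback path the reimplementation dropped. Whether that difference is a defect or an intended change is a decision for the team; the check only makes it visible.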

Incorporating Contract Validation into DevSecOps Security Gates

As modernization multiplies system interfaces, contract based design becomes essential for verifying that components behave consistently across environments. DevSecOps pipelines incorporate contract validation gates that evaluate whether components satisfy defined assumptions and guarantees. These gates prevent incompatible changes from progressing toward production. For instance, in a national healthcare information system, referral routing services rely on strict ordering and validation constraints. If modernization alters message formats, encoding rules, or ordering semantics, the absence of contract validation allows erroneous updates to propagate system wide.

Contract validation tools analyze incoming changes by checking whether revised components maintain required behavioral guarantees. They also validate that environmental assumptions remain satisfied given downstream dependencies. Insights from research on search driven impact validation illustrate how understanding transitional dependencies informs contract definition. During pipeline execution, contract validators block deployments that violate correctness boundaries and provide actionable diagnostics. This ensures that modernization proceeds safely, even when teams work in parallel across multiple components and execution environments.
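
One check such a gate can perform is contract refinement: a revised component may assume less and guarantee more than its predecessor, but not the reverse. The sketch below tests this over a small, hypothetical domain of referral priorities and routing statuses. The contracts are plain dictionaries of predicates invented for this example.

```python
from itertools import product

PRIORITIES = range(0, 7)                    # candidate inputs
STATUSES = ("routed", "queued", "dropped")  # candidate outputs

# Hypothetical contracts: "assume" constrains inputs, "guarantee" constrains outputs.
current = {
    "assume": lambda p: 1 <= p <= 5,
    "guarantee": lambda p, s: s in ("routed", "queued"),
}
compatible = {
    "assume": lambda p: 0 <= p <= 5,        # accepts more inputs
    "guarantee": lambda p, s: s == "routed",  # promises more
}
incompatible = {
    "assume": lambda p: 1 <= p <= 3,        # rejects inputs that used to be valid
    "guarantee": lambda p, s: True,         # now permits "dropped"
}


def refinement_violations(old, new):
    """List the ways the new contract fails to refine the old one."""
    problems = []
    for p in PRIORITIES:
        if old["assume"](p) and not new["assume"](p):
            problems.append(("assumption strengthened", p))
    for p, s in product(PRIORITIES, STATUSES):
        if old["assume"](p) and new["guarantee"](p, s) and not old["guarantee"](p, s):
            problems.append(("guarantee weakened", p, s))
    return problems


print(refinement_violations(current, compatible))
print(refinement_violations(current, incompatible))
```

An empty result lets the change proceed. A non-empty result gives the diagnostics the gate reports, for example that priority 4 is no longer accepted or that a referral may now be dropped.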

Establishing Assurance Evidence Through Continuous Formal Reasoning

Formal verification provides assurance evidence required for safety certification, regulatory compliance, and modernization governance. Integrating this evidence into CI/CD and DevSecOps pipelines transforms assurance from a periodic activity into a continuous process. Each proof artifact, model checking trace, or contract validation record becomes part of an auditable history that documents system correctness across time. For example, a biometric authentication platform supporting public sector services might require demonstrable evidence that all updates preserve liveness guarantees, data integrity, and failure recovery semantics.

Pipelines automatically store these artifacts and associate them with build identifiers, deployment events, and architectural changes. This ensures that compliance teams can trace correctness obligations through every modernization stage. Analytical work on critical failure mapping helps organizations understand how deviations propagate, supporting stronger assurance arguments. By embedding formal methods into pipeline governance, enterprises maintain operational reliability even as systems evolve. This continuous record of verification shapes long term modernization strategy by identifying stable components, fragile areas, and emerging risk vectors.
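
One way to make such a history tamper evident, offered here as an assumption rather than a description of any specific pipeline product, is to chain each evidence record to the previous one with a hash. The record fields and build identifiers below are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64
FIELDS = ("build_id", "check", "verdict", "prev")


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(chain, build_id, check, verdict):
    """Append an evidence record linked to the previous record's digest."""
    prev = chain[-1]["digest"] if chain else GENESIS
    body = {"build_id": build_id, "check": check, "verdict": verdict, "prev": prev}
    chain.append({**body, "digest": _digest(body)})


def verify(chain):
    """Recompute every digest and link; False if any record was altered."""
    prev = GENESIS
    for record in chain:
        body = {key: record[key] for key in FIELDS}
        if record["prev"] != prev or _digest(body) != record["digest"]:
            return False
        prev = record["digest"]
    return True


# Hypothetical evidence history for two builds.
history = []
append_record(history, "build-101", "liveness-model-check", "pass")
append_record(history, "build-102", "contract-validation", "pass")
print(verify(history))
```

Because each digest covers the previous one, editing an earlier verdict invalidates every later link, so an auditor can confirm that the recorded history matches what the pipeline actually produced.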

Scaling Formal Verification Across Legacy, Heterogeneous, and Polyglot Codebases

Scaling formal verification requires organizations to move beyond isolated proofs and adopt systematic strategies capable of handling enterprise level codebases with long operational histories. Legacy systems often span multiple languages, data formats, and execution models, creating verification landscapes that differ significantly from modern, modular architectures. These systems include batch programs, event driven components, domain specific languages, and embedded business rules woven through decades of incremental change. Verification teams must therefore unify diverse semantics under a coherent modeling and reasoning framework. The challenge intensifies when modernization proceeds in parallel, since both legacy and modern code must be verified concurrently. Analytical perspectives on application integration design show how heterogeneous infrastructures complicate cross component reasoning. Formal verification succeeds only when this complexity is accounted for through scalable abstraction and modularization.

Polyglot systems further complicate verification by introducing languages with different typing rules, concurrency semantics, error handling conventions, and runtime characteristics. In many enterprises, decades of investment have produced ecosystems where COBOL, Java, Python, SQL, and proprietary scripting coexist. Ensuring correctness across such environments requires verification strategies that generalize behavior without losing the precision needed for liveness, safety, and ordering guarantees. Insights from research on dependency graph analysis demonstrate how structural mapping reveals hidden cross language interactions that must be incorporated into formal models. As organizations modernize these polyglot landscapes into distributed or cloud native architectures, scalable verification becomes essential for preventing regressions and preserving operational integrity.

Harmonizing Semantics Across Multiple Languages and Execution Paradigms

A key difficulty in verifying polyglot systems lies in reconciling disparate language semantics into a unified abstraction. For example, a legacy insurance processing platform may include COBOL batch programs, Java middleware, JavaScript front end logic, and Python analytics extensions. Each language exhibits unique semantics for concurrency, exception handling, state mutation, and memory management. Formal verification demands consistent abstraction across these features so that models accurately reflect the end to end system behavior.

To achieve this, verification teams construct semantic profiles for each language, identifying constructs that influence control flow, state transitions, and error propagation. These profiles form the basis for language neutral models such as extended state machines or symbolic relational structures. Analytical work on mixed technology modernization clarifies how cross language dependencies evolve during modernization. For example, replacing synchronous COBOL routines with asynchronous microservices alters communication semantics that must be reflected in formal models. Verification teams use symbolic reasoning, abstract interpretation, and interface contracts to harmonize behavior. Once unified semantics are established, theorem provers and model checkers operate across a single coherent model, enabling scalable, end to end validation of correctness properties.

Partitioning Large Codebases into Verification Ready Modules

Large systems must be decomposed into verification ready segments to remain tractable. Attempting to model and verify an entire monolithic application at once results in intractable state explosion and unmanageable proof obligations. Effective scaling requires partitioning based on architectural boundaries, data ownership, execution phases, or dependency hierarchies. Consider a global manufacturing control system with thousands of interacting programs. Some components manage sensor ingestion, others coordinate material handling, while predictive modules operate asynchronously on statistical models. Verification teams must identify natural verification boundaries that isolate stable behavioral units.

Static insights from the study of failure propagation risk reveal where dependencies are tightly coupled and where modular decomposition is safe. With this information, engineers partition the codebase into modules that can be verified independently under well defined assumptions. Each module receives its own state model, invariants, and temporal guarantees. When the modules are reassembled into a global system, assume guarantee reasoning establishes the correctness of the entire architecture, provided each module's assumptions are discharged by the others. This approach can keep verification effort roughly proportional to the number of modules rather than to the combined state space of the whole system, enabling practical adoption across multi million line codebases undergoing modernization.
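
A simple way to locate tightly coupled regions is to compute the strongly connected components of the dependency graph: programs that depend on each other cyclically land in the same component and are candidates for joint verification, while the rest can be separated. The sketch below uses Tarjan's algorithm on a hypothetical call graph for the manufacturing example.

```python
def strongly_connected_components(graph):
    """Tarjan's algorithm; graph maps each node to the nodes it depends on."""
    index, low, on_stack, stack, result = {}, {}, set(), [], []

    def visit(node):
        index[node] = low[node] = len(index)
        stack.append(node)
        on_stack.add(node)
        for succ in graph.get(node, ()):
            if succ not in index:
                visit(succ)
                low[node] = min(low[node], low[succ])
            elif succ in on_stack:
                low[node] = min(low[node], index[succ])
        if low[node] == index[node]:  # node is the root of a component
            component = set()
            while True:
                member = stack.pop()
                on_stack.discard(member)
                component.add(member)
                if member == node:
                    break
            result.append(component)

    for node in graph:
        if node not in index:
            visit(node)
    return result


# Hypothetical dependencies between programs in the control system.
calls = {
    "predictive_model": ["sensor_ingest"],
    "sensor_ingest": ["material_handling"],
    "material_handling": ["line_scheduler"],
    "line_scheduler": ["material_handling"],
}

components = strongly_connected_components(calls)
print(components)
```

Here `material_handling` and `line_scheduler` form one component and would be modeled together, while `sensor_ingest` and `predictive_model` each stand alone. The recursive form is fine for a sketch, but very deep graphs would need an iterative variant to avoid Python's recursion limit.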

Integrating Formal Models with Real Operational Telemetry to Guide Verification Scope

Operational telemetry provides valuable insights that help verification teams determine which behaviors are critical to model and prove. Legacy systems often contain dormant code paths, obsolete features, or rarely triggered error states that inflate model complexity without improving verification value. Telemetry helps identify the most frequently used paths, highest risk interactions, and recurrent anomalies. For instance, a retail transaction engine may exhibit rare concurrency spikes or occasional retry storms under high seasonal load. Telemetry identifies these conditions so that verification models incorporate relevant behaviors while safely abstracting away unreachable or low value paths.

Studies on telemetry guided impact analysis demonstrate how real behavioral data refines modernization planning. Verification teams apply similar techniques by correlating telemetry insights with formal models. For example, if telemetry identifies a recurring deadlock pattern under specific data distributions, formal models incorporate these states and evaluate them rigorously. Conversely, if telemetry indicates that a legacy fallback path has not executed for years due to superseded business logic, the path may be abstracted. This synergy ensures that verification remains focused, scalable, and aligned with real operational risks during modernization.

Ensuring Verification Continuity Across Hybrid Legacy and Modern Environments

Modernization introduces hybrid environments where legacy components operate alongside modern microservices, cloud platforms, and event driven architectures. Ensuring verification continuity across these mixed topologies is one of the most challenging aspects of enterprise scale formal reasoning. Each environment imposes different timing rules, communication mechanisms, and consistency guarantees. A system that once operated on predictable batch cycles may now rely on asynchronous events, distributed caches, and autoscaling behaviors that introduce nondeterminism.

Verification teams construct bridge models that unify legacy semantics with modern runtime characteristics. Analytical studies on risk reduction through dependency simplification show how simplifying dependencies improves system resilience. Similar insights inform verification boundaries by identifying where modernization changes introduce new timing or ordering conditions. Formal models then combine legacy constraints such as deterministic file reads with modern constructs like eventual consistency or asynchronous message arrival. This hybrid modeling ensures that verification remains valid across transitional stages. As modernization progresses, verified models evolve iteratively, preserving correctness guarantees even when execution environments change dramatically.
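
As a small illustration of why bridge models matter, the sketch below checks one invariant against both a deterministic legacy ordering and every possible asynchronous arrival order. The account lifecycle, event names, and `violates` function are assumptions made for this example. Exhaustive enumeration of orderings is practical only for very small event sets.

```python
from itertools import permutations

EVENTS = ("open", "credit", "close")  # legacy file order, read top to bottom


def violates(order):
    """Return True if the order applies an event to an account in the wrong state."""
    state = "absent"
    for event in order:
        if event == "open":
            if state != "absent":
                return True
            state = "open"
        elif event == "credit":
            if state != "open":
                return True
        elif event == "close":
            if state != "open":
                return True
            state = "closed"
    return False


# Legacy semantics: a single deterministic order.
legacy_ok = not violates(EVENTS)

# Modern semantics: messages may arrive in any order.
unsafe_orders = [order for order in permutations(EVENTS) if violates(order)]
```

The legacy order satisfies the invariant, but five of the six asynchronous arrival orders do not. A bridge model makes this gap explicit, so the modernized design must either enforce ordering or tolerate reordering.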

Certification, Compliance, and Audit Trails with Formal Evidence for Critical Systems

Certification frameworks for aviation, defense, energy, finance, and public infrastructure demand deterministic evidence that critical systems behave correctly under all authorized conditions. Traditional testing offers partial coverage that cannot satisfy these strict assurance requirements. Formal verification fills this gap by providing mathematically grounded guarantees that safety and liveness properties hold across all reachable states of the verified model. As modernization transforms legacy systems into distributed or service oriented architectures, certification bodies increasingly expect high precision evidence that demonstrates functional equivalence with previously validated behavior. This shift reflects a broader industry trend in which correctness must be continuously demonstrated rather than periodically reexamined.

Compliance regimes impose additional responsibilities by requiring organizations to track and document how correctness obligations evolve over time. Regulations often mandate proof artifacts showing exactly how system updates, refactoring decisions, or architectural transitions affect operational behavior. Without these artifacts, organizations risk audit gaps or certification delays. The ability to generate persistent, traceable evidence becomes especially important during modernization where legacy assumptions, interface contracts, and operational constraints change rapidly. Analytical guidance from studies of governance oversight in modernization illustrates how structured documentation supports long term system governance. Formal verification extends this structure into the correctness domain by producing audit ready artifacts that support compliance across the system lifecycle.

Demonstrating Safety Properties for Industry Certification Standards

Safety certification requires proof that systems satisfy critical invariants such as bounded outputs, monotonic state transitions, or the absence of unsafe states. Industries such as aviation and medical device manufacturing impose rigorous standards that demand evidence of safety properties under all allowed conditions. For example, a flight management subsystem must guarantee that certain control commands do not produce oscillatory or divergent behavior. Legacy implementations often rely on assumed invariants that were never formally documented. During modernization, these assumptions may no longer hold due to changes in execution timing, message distribution, or scheduling semantics.

Formal verification provides mathematical guarantees that safety invariants remain consistent across transformed architectures. Verification teams construct detailed models that capture system dynamics, environmental constraints, and failure modes. They then use theorem proving or model checking to validate that safety properties remain intact. Analytical perspectives from the study of critical system decomposition help teams discover implicit assumptions that must be accounted for in safety models. Certification bodies can review the resulting proof artifacts, which include invariant definitions, proof steps, and counterexample analyses. This level of rigor ensures that modernization does not compromise safety guarantees and that newly deployed architectures remain certifiable under existing regulatory regimes.
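
The following sketch shows explicit state model checking of a safety invariant in its simplest form: a breadth first search over reachable states that returns a counterexample trace if the invariant fails. The command range and the two transition functions are hypothetical. Production model checkers use far more sophisticated state representations.

```python
from collections import deque


def check_invariant(initial, successors, invariant):
    """Return None if the invariant holds on all reachable states,
    otherwise a shortest trace from `initial` to a violating state."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


LIMIT = 2  # hypothetical bound on a control command


def bounded(cmd):
    return abs(cmd) <= LIMIT


def correct_steps(cmd):
    steps = []
    if cmd < LIMIT:
        steps.append(cmd + 1)
    if cmd > -LIMIT:
        steps.append(cmd - 1)
    return steps


def buggy_steps(cmd):
    steps = []
    if cmd <= LIMIT:  # off-by-one guard, introduced deliberately for the example
        steps.append(cmd + 1)
    if cmd > -LIMIT:
        steps.append(cmd - 1)
    return steps


safe_result = check_invariant(0, correct_steps, bounded)
counterexample = check_invariant(0, buggy_steps, bounded)
```

For the correct transition function the search exhausts the state space and returns `None`. For the buggy variant it returns the trace `[0, 1, 2, 3]`, the kind of counterexample that would be attached to a proof artifact for review.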

Building Compliance Ready Documentation from Formal Methods Artifacts

Compliance frameworks require organizations to maintain detailed documentation that demonstrates how each system update affects operational behavior. This documentation must remain internally consistent across versions and traceable to source changes. Formal verification produces structured artifacts such as invariant definitions, reduction arguments, liveness proofs, and trace checking results that support these documentation requirements. By capturing these artifacts within verification management systems, organizations create persistent records that auditors can examine without reconstructing analysis from scratch.

Consider a financial transaction clearing platform undergoing a transition from monolithic batch logic to distributed transaction processing. Compliance teams must demonstrate that data integrity, transaction atomicity, and authorization flows have not been compromised. Insights from the analysis of integrity assurance show how structured reasoning frameworks disclose failure semantics that influence documentation quality. Formal artifacts allow organizations to map each update to specific correctness checks, including whether invariants were revalidated and whether any deviations emerged during model checking. These artifacts become part of a continuous audit trail that supports compliance assessments during and after modernization.
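
One way to make such a trail tamper evident is to chain each record to its predecessor with a cryptographic digest. The sketch below is an assumption about how this could be done, not a description of any particular verification management system. The record fields and change identifiers are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder digest for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(trail, change_id, checks):
    """Append a record linking a change to the outcome of its correctness checks."""
    prev = trail[-1]["digest"] if trail else GENESIS
    body = {"change_id": change_id, "checks": checks, "prev": prev}
    trail.append({**body, "digest": _digest(body)})


def verify(trail):
    """Recompute every digest and link; return False on any mismatch."""
    prev = GENESIS
    for entry in trail:
        body = {key: entry[key] for key in ("change_id", "checks", "prev")}
        if entry["prev"] != prev or entry["digest"] != _digest(body):
            return False
        prev = entry["digest"]
    return True


trail = []
append_entry(trail, "CHG-001", {"atomicity": "revalidated", "authorization": "revalidated"})
append_entry(trail, "CHG-002", {"atomicity": "revalidated", "integrity": "deviation found"})
intact = verify(trail)
```

Any later edit to a recorded check outcome changes that record's digest and breaks verification, which gives auditors a simple consistency check over the whole history.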

Maintaining Traceability from Requirements to Proof Obligations

Regulatory agencies increasingly expect traceability between system requirements, specifications, and verification artifacts. This requirement ensures that proofs directly correspond to stated obligations and that no assumptions or exceptions are unaccounted for. Traceability is especially important in modernization because legacy requirements often differ from those of modern architectures. For example, a legacy batch requirement that processing completes in fixed time windows may become irrelevant in an event driven architecture, yet its safety implications may persist in other forms.

Verification teams construct traceability matrices linking requirements to specific proof obligations. Studies on requirement dependent modernization highlight how mismatches between legacy and modern requirements produce subtle errors. Formal models, invariants, and temporal logic conditions provide the structure for mapping each requirement to a verification step. Proof tools generate explicit evidence for each mapping, including inductive proof steps, counterexample searches, and failure analyses. This level of traceability supports not only regulatory review but also internal architecture governance, ensuring that modernization does not introduce unvalidated assumptions.
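
A traceability matrix can be checked mechanically for gaps. The sketch below assumes requirements and proof obligations are kept as simple records. The identifiers, field names, and status values are hypothetical.

```python
def traceability_gaps(requirements, obligations):
    """Return (requirements lacking a proved obligation,
    obligations that reference no known requirement)."""
    by_requirement = {}
    for obligation in obligations:
        for req_id in obligation["requirements"]:
            by_requirement.setdefault(req_id, []).append(obligation)

    unproved = sorted(
        req_id
        for req_id in requirements
        if not any(o["status"] == "proved" for o in by_requirement.get(req_id, []))
    )
    orphans = sorted(
        o["id"] for o in obligations if not set(o["requirements"]) & set(requirements)
    )
    return unproved, orphans


requirements = {
    "REQ-1": "Settlement totals balance after every batch",
    "REQ-2": "No message is processed twice",
    "REQ-3": "Processing completes within the agreed window",
}
obligations = [
    {"id": "PO-1", "requirements": ["REQ-1"], "status": "proved"},
    {"id": "PO-2", "requirements": ["REQ-2"], "status": "counterexample"},
    {"id": "PO-3", "requirements": ["REQ-9"], "status": "proved"},
]
unproved, orphans = traceability_gaps(requirements, obligations)
```

In this example `REQ-2` and `REQ-3` lack a proved obligation, and `PO-3` refers to a requirement that no longer exists. The second case is the kind of leftover that can appear when legacy requirements are retired during modernization.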

Producing Machine Verifiable Evidence for Auditors and Certification Boards

Auditors and certification agencies require evidence that is both human interpretable and machine verifiable. Machine verifiable evidence reduces ambiguity by ensuring that proofs can be replayed for independent validation. Modern verification tools generate replay logs, proof certificates, counterexample traces, and satisfiability results that become part of the compliance record. For example, a national identity verification system may require proof that authentication state transitions remain consistent under high concurrency. Machine verifiable artifacts demonstrate precisely how these guarantees hold for all possible inputs.

Analytical work on system wide failure tracing illustrates the importance of rigorous examination of operational paths. Verification teams incorporate these findings into formal models and generate machine verifiable proof artifacts. These artifacts include encoded invariants, temporal specifications, and logic constraints. Auditors can replay these proofs to validate results without reexamining the model manually. This approach strengthens the integrity of certification processes and provides organizations with defensible evidence that their modernization programs maintain compliance and operational reliability.
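
Replay can be sketched for one artifact type, the counterexample trace. The checker below independently confirms that a recorded trace starts in the initial state, follows only permitted transitions, and ends in a state that violates the invariant. The authentication model, including its deliberate defect, is a hypothetical example.

```python
def replay(trace, initial, successors, invariant):
    """Return True only if `trace` is a genuine counterexample for the model."""
    if not trace or trace[0] != initial:
        return False
    for current, nxt in zip(trace, trace[1:]):
        if nxt not in successors(current):
            return False
    return not invariant(trace[-1])


# State is (status, account_locked). Hypothetical defect: a locked account
# can still complete a challenge successfully.
def successors(state):
    status, locked = state
    if status == "anonymous":
        return {("challenged", locked)}
    if status == "challenged":
        return {("authenticated", locked), ("anonymous", True)}
    return {("anonymous", locked)}


def invariant(state):
    status, locked = state
    return not (status == "authenticated" and locked)


INITIAL = ("anonymous", False)
recorded = [
    ("anonymous", False),
    ("challenged", False),
    ("anonymous", True),
    ("challenged", True),
    ("authenticated", True),
]
genuine = replay(recorded, INITIAL, successors, invariant)
forged = replay([INITIAL, ("authenticated", True)], INITIAL, successors, invariant)
```

The recorded trace replays successfully, while the forged trace is rejected because it skips a transition the model does not allow. The replay logic is much smaller than the tool that produced the trace, which is what makes independent validation practical.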

How Smart TS XL Accelerates Formal Reasoning on Large Critical Codebases

Smart TS XL enhances formal verification workflows by providing structural visibility, semantic extraction, and dependency analysis at a scale that traditional tooling cannot achieve. Critical systems often consist of millions of lines of legacy code accumulated over decades of layer by layer modifications. These systems contain undocumented assumptions, deeply embedded transitions, and cross module dependencies that complicate formal modeling. Smart TS XL surfaces this information through automated impact analysis, inter procedural mapping, and code visualization, enabling verification teams to construct accurate specifications faster and with significantly reduced manual effort. This acceleration is essential for modernization programs that operate under strict timelines and regulatory expectations.

Smart TS XL also strengthens the correctness pipeline by integrating seamlessly into DevSecOps environments. It identifies areas of architectural drift, potential failure propagation, hidden code paths, and cyclic dependencies that would complicate formal proofs if left undiscovered. These insights ensure that theorem proving, model checking, and contract validation target the right abstractions at the right boundaries. Analytical approaches such as those referenced in the discussion of static code visualization illustrate how structured insights provide a foundation for formal reasoning. Smart TS XL elevates this capability by delivering automated, high fidelity system maps suitable for direct use in verification workflows.

Accelerating Model Construction Through Automated Dependency and Control Flow Discovery

Model construction represents one of the most time intensive components of formal verification. Smart TS XL reduces this burden by extracting end to end control flow structures, dependency graphs, state transitions, and variable propagation chains from large and heterogeneous systems. Consider a financial transaction processing platform that integrates COBOL batch logic with distributed Java event handlers. Constructing state machine or temporal logic models manually would require extensive domain knowledge and deep traversal of legacy codebases. Smart TS XL automatically uncovers these relationships, presenting them as navigable dependency structures.

These visualizations become foundational for creating accurate formal models. Insights derived from analytical approaches related to full control flow mapping show how deeply hidden transitions influence system correctness. Smart TS XL exposes such transitions at scale, enabling verification engineers to construct precise invariants, liveness conditions, and failure models. By providing clean partitions of functional domains, Smart TS XL ensures that formal verification focuses on architecturally significant boundaries rather than noise introduced by incidental code behavior. This improves both the accuracy and efficiency of model construction across modernization cycles.

Enhancing Proof Obligations with Traceable Semantic and Data Flow Structures

Formal verification requires detailed traceability between system semantics and proof obligations. Smart TS XL provides this through comprehensive semantic extraction and data flow mapping. Legacy systems typically contain implicit data transformations, fallback logic, and state mutation patterns that are difficult to reconstruct manually. When these semantics are unclear, formal proofs risk becoming unsound or incomplete. Smart TS XL reduces this ambiguity by generating explicit maps of variable lifetimes, mutation sites, and inter procedural data dependencies.

These insights support rigorous proof obligation construction. Analytical research into data driven reasoning highlights the importance of understanding transformation semantics during modernization. Smart TS XL enhances this understanding by revealing hidden aliases, dormant code paths, and branching dependencies that influence verification boundaries. Armed with these insights, theorem provers and model checkers can be configured with precise assumptions and invariants. As a result, proof artifacts become more accurate, easier to validate, and more resilient to architectural change during modernization.
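
To show what a map of mutation sites looks like, the sketch below uses Python's standard `ast` module to list the lines on which each plain variable is assigned. It is a generic illustration over Python source and does not represent how Smart TS XL analyzes COBOL, Java, or any other language. It ignores attribute and subscript stores, loop targets, and aliasing.

```python
import ast
from collections import defaultdict


def mutation_sites(source):
    """Map each simple variable name to the sorted lines where it is assigned."""
    sites = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Assign):
            targets = node.targets
        elif isinstance(node, ast.AugAssign):
            targets = [node.target]
        else:
            continue
        for target in targets:
            for child in ast.walk(target):
                # Only names written to directly (Store context) are recorded.
                if isinstance(child, ast.Name) and isinstance(child.ctx, ast.Store):
                    sites[child.id].append(node.lineno)
    return {name: sorted(lines) for name, lines in sites.items()}


SAMPLE = """
balance = 0
retries = 0
balance += 10
if balance > 5:
    retries = retries + 1
"""
sites = mutation_sites(SAMPLE)
```

Here `sites` maps `balance` to lines 2 and 4 and `retries` to lines 3 and 6. A verification engineer can use a map of this kind to decide which state variables an invariant must mention.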

Improving Modernization Readiness with Automated Impact Analysis and Boundary Identification

One of the most challenging aspects of formal verification in modernization programs lies in determining where verification boundaries should be placed. Poor boundary selection leads to unmanageable proof obligations or incomplete reasoning. Smart TS XL provides automated impact analysis that identifies natural system partitions based on dependency strength, invocation patterns, and data coupling metrics. For example, in a logistics optimization engine, certain modules may influence only localized routing functions while others govern high risk global behaviors.

Insights from organizational studies on impact driven modernization demonstrate how understanding dependency structures informs safe transformation decisions. Smart TS XL extends this capability by producing automated impact reports that highlight which modules require deep formal analysis and which can be abstracted. These reports reduce manual triage overhead and ensure that verification efforts align with modernization priorities. As modernization progresses, Smart TS XL continuously updates these partitions, ensuring that formal verification remains synchronized with evolving system architectures.
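
Boundary identification by coupling strength can be approximated with a simple grouping rule: modules whose pairwise coupling meets a threshold are placed in the same verification partition. The sketch below uses a union find structure. The coupling scores, threshold, and module names are hypothetical, and the approach is a generic illustration, not the product's algorithm.

```python
def partition(modules, coupling, threshold):
    """Group modules connected by coupling scores at or above `threshold`."""
    parent = {module: module for module in modules}

    def find(module):
        # Follow parent links to the group representative, compressing the path.
        while parent[module] != module:
            parent[module] = parent[parent[module]]
            module = parent[module]
        return module

    for (left, right), weight in coupling.items():
        if weight >= threshold:
            parent[find(left)] = find(right)

    groups = {}
    for module in modules:
        groups.setdefault(find(module), set()).add(module)
    return sorted(sorted(group) for group in groups.values())


# Illustrative coupling scores for a hypothetical logistics engine.
modules = ["routing", "pricing", "dispatch", "audit"]
coupling = {
    ("routing", "dispatch"): 0.9,
    ("pricing", "routing"): 0.2,
    ("dispatch", "audit"): 0.1,
}
partitions = partition(modules, coupling, threshold=0.5)
```

With a threshold of 0.5, routing and dispatch form one partition that would be verified together, while pricing and audit stand alone behind their interface assumptions. Lowering the threshold merges partitions and enlarges each proof obligation, so the threshold is a tuning decision and not a fixed constant.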

Enabling Continuous Verification Through Integration with CI/CD and Governance Systems